Intel has finally shipped the GPU everyone in the AI community has been quietly waiting for — a "Big Battlemage" with real memory capacity. The Arc Pro B70 launched this week, and it's Intel's most powerful Xe2-architecture GPU to date: 32 Xe2 cores, 32GB of GDDR6 memory, and an Intel reference design priced at $949.

It's not for gaming. Intel is being explicit about that. The Arc Pro B70 is designed from the ground up for AI inference, professional creative workloads, and multi-GPU rack deployments where memory capacity matters more than raw gaming frame rates.

What Makes It Interesting for AI

The headline spec is the memory. With 32GB of GDDR6 on a 256-bit bus, the B70 can hold genuinely large language models in VRAM. Most consumer GPUs, including the RTX 4090, top out at 24GB. That is not enough for the weights of a 13B-parameter model at 16-bit precision (roughly 26GB), let alone a 70B model, which needs around 35GB even at 4-bit quantization.
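A quick way to sanity-check capacity claims like these is to multiply parameter count by bytes per parameter. The sketch below is a rough estimate under stated assumptions: it counts model weights only (no KV cache, activations, or framework overhead), treats card capacities such as "24GB" and "32GB" as GiB, and uses hypothetical helper names (`weight_gib`, `fits`) chosen for illustration.

```python
# Rough VRAM estimate for model weights only. KV cache, activations and
# runtime overhead are ignored, so real-world usage will be higher.

BYTES_PER_PARAM = {
    "fp16": 2.0,  # 16-bit weights (FP16 / BF16)
    "int8": 1.0,  # 8-bit quantization
    "int4": 0.5,  # 4-bit quantization (ignores per-group scale overhead)
}

GIB = 2**30  # card capacities like "24GB" / "32GB" are treated as GiB here


def weight_gib(params_billions: float, precision: str) -> float:
    """Approximate weight footprint in GiB."""
    return params_billions * 1e9 * BYTES_PER_PARAM[precision] / GIB


def fits(params_billions: float, precision: str, vram_gib: float) -> bool:
    """True if the weights alone fit within the given VRAM."""
    return weight_gib(params_billions, precision) <= vram_gib


if __name__ == "__main__":
    for params, prec in [(13, "fp16"), (30, "int4"), (70, "int4")]:
        need = weight_gib(params, prec)
        print(
            f"{params}B @ {prec}: ~{need:.1f} GiB | "
            f"fits 24GB: {fits(params, prec, 24)} | fits 32GB: {fits(params, prec, 32)}"
        )
```

Under these assumptions a 13B model at 16-bit needs about 24.2 GiB for its weights, just over a 24GB card but within 32GB, while a 70B model at 4-bit (about 32.6 GiB) exceeds both.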

32GB isn't the ceiling for AI work by any means, but it's a meaningful step up for developers who want to run larger open-weight models locally without splitting them across multiple cards. The Arc Pro B70 supports multi-GPU configurations for rack-scale deployments, letting organizations chain multiple cards for larger workloads.
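When a model does not fit on one card, a back-of-the-envelope estimate of how many 32GB cards a multi-GPU setup needs is a ceiling division. This sketch assumes memory splits evenly across cards and reserves 10% of each card for runtime overhead; the `cards_needed` helper and the 10% figure are illustrative assumptions, not Intel guidance, and real frameworks rarely shard a model perfectly evenly.

```python
import math


def cards_needed(total_gib: float, per_card_gib: float = 32.0,
                 usable_fraction: float = 0.9) -> int:
    """Minimum card count for a model needing `total_gib` of memory.

    Assumes the model splits evenly across cards and that only
    `usable_fraction` of each card is available (the rest is reserved
    for runtime overhead). Both are simplifying assumptions.
    """
    if total_gib <= 0:
        raise ValueError("total_gib must be positive")
    usable_per_card = per_card_gib * usable_fraction
    return math.ceil(total_gib / usable_per_card)


if __name__ == "__main__":
    GIB = 2**30
    for label, params, bytes_per_param in [("70B @ int4", 70e9, 0.5),
                                           ("70B @ fp16", 70e9, 2.0)]:
        total = params * bytes_per_param / GIB
        print(f"{label}: ~{total:.1f} GiB -> {cards_needed(total)} x 32GB cards")
```

For example, a 70B model at 4-bit (about 32.6 GiB of weights) comes out to two cards under these assumptions, and the same model at 16-bit (about 130 GiB) to five.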

Intel also launched a smaller sibling, the Arc Pro B65, with 20 Xe2 cores. The B65 is available only through partner designs, with no Intel reference card, and is aimed at more cost-sensitive workloads.

Why Intel Is Going After AI Hardware

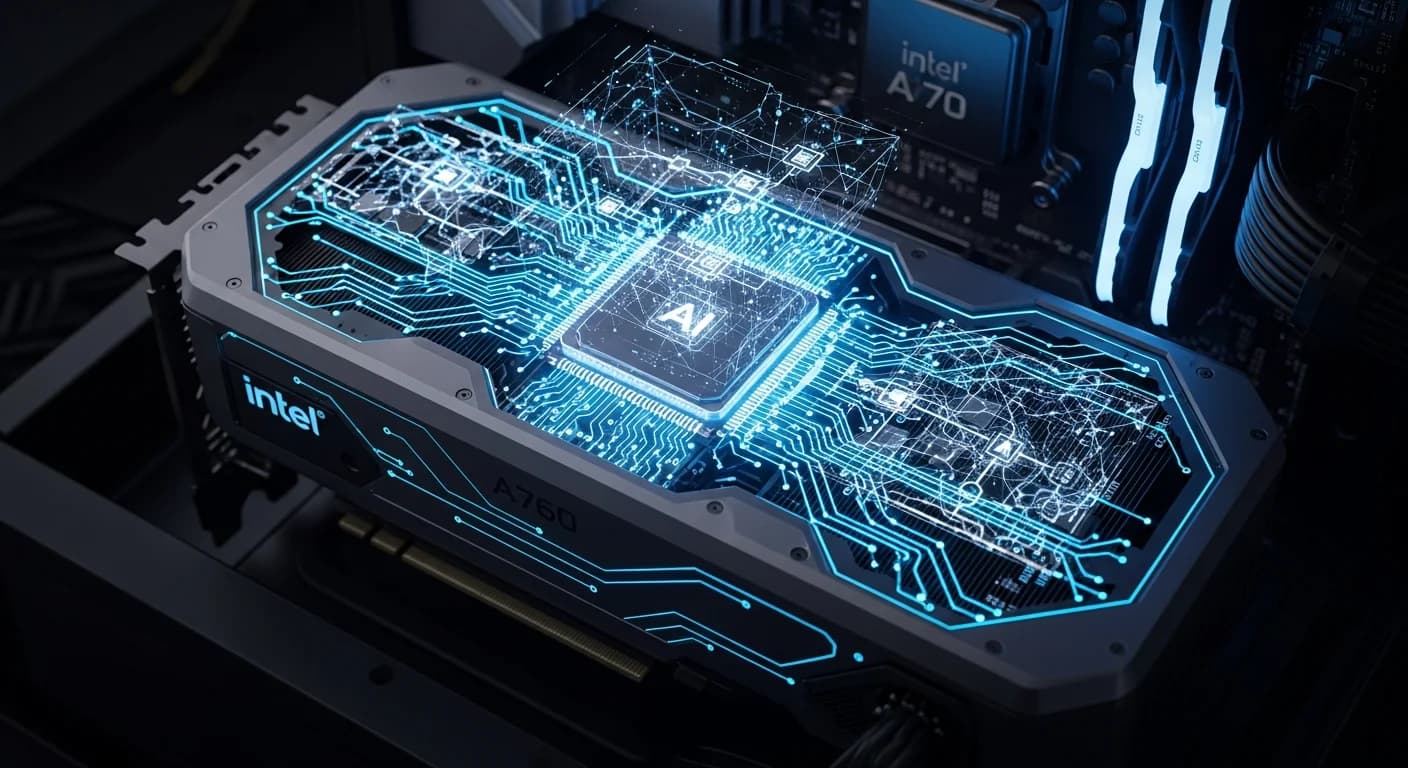

Intel's consumer GPU journey has been rough. The Arc A-series launched in 2022 with driver instability and performance inconsistencies that made adoption difficult. The Xe2-based Arc B580 released in late 2024 was better received, but Intel has never posed a serious threat to NVIDIA's gaming dominance.

The AI market is a different story. NVIDIA's data center AI accelerators (the A100, H100, and H200) command enormous premiums, and even its RTX Pro 6000 workstation card costs more than $2,000. The gap between "consumer GPU that happens to run AI" and "enterprise AI hardware" is wide, and Intel is planting its flag in the middle of it.

The Arc Pro B70 at $949 puts 32GB of VRAM within reach of developers, small labs, and startups that can't justify enterprise GPU prices but need more than what consumer cards offer.

The Software Question

Hardware is only half the story. Intel has historically struggled with its software ecosystem. CUDA, NVIDIA's programming platform, has more than a decade of optimization work embedded in nearly every AI framework. AMD's ROCm has made progress but still lags. Support for Intel's oneAPI and its XPU backend in PyTorch, TensorFlow, and other frameworks has improved significantly, but "improved" is not the same as "at parity."
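In practice, targeting an Intel GPU from PyTorch largely comes down to selecting the `xpu` device instead of `cuda`. The sketch below assumes a recent PyTorch build (taken here to be 2.4 or later, where upstream Intel GPU support appeared) and falls back gracefully when PyTorch or an accelerator is missing; `pick_device` is a hypothetical helper, not part of any library.

```python
def pick_device() -> str:
    """Return the first available accelerator backend name, else "cpu"."""
    try:
        import torch
    except ImportError:
        return "cpu"  # PyTorch not installed: nothing to accelerate with
    # torch.xpu is PyTorch's Intel GPU backend in recent releases; the
    # hasattr check guards against older builds that lack the module.
    if hasattr(torch, "xpu") and torch.xpu.is_available():
        return "xpu"
    if torch.cuda.is_available():
        return "cuda"
    return "cpu"


if __name__ == "__main__":
    print(f"Using device: {pick_device()}")
```

With PyTorch available, passing the result as `device=` (for example `torch.randn(4, 4, device=pick_device())`) lets the same code run on an Intel GPU, an NVIDIA GPU, or the CPU.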

Intel claims the Arc Pro series is optimized for popular AI frameworks and carries the industry-standard software certifications that professional workloads require. The real test will be how quickly the developer community adopts it, and whether Intel can keep pace with software updates as AI model architectures continue to evolve.

Positioning Against the Market

NVIDIA still dominates AI compute from cloud to workstation. AMD is competing hard with the MI300X at the high end. Intel's play is the middle ground: more memory than consumer cards, dramatically lower price than enterprise cards, and a bet that local AI inference demand keeps growing.

The timing isn't bad. As open-weight models improve and developers push toward running AI locally rather than via API, the demand for mid-tier AI hardware with serious memory capacity is real. The question is whether Intel can turn a good spec sheet into real developer adoption — and the answer to that will depend on software as much as silicon.

Partner-designed Arc Pro B65 cards are available now. Intel's B70 reference design starts at $949, and partner B70 variants vary in price.