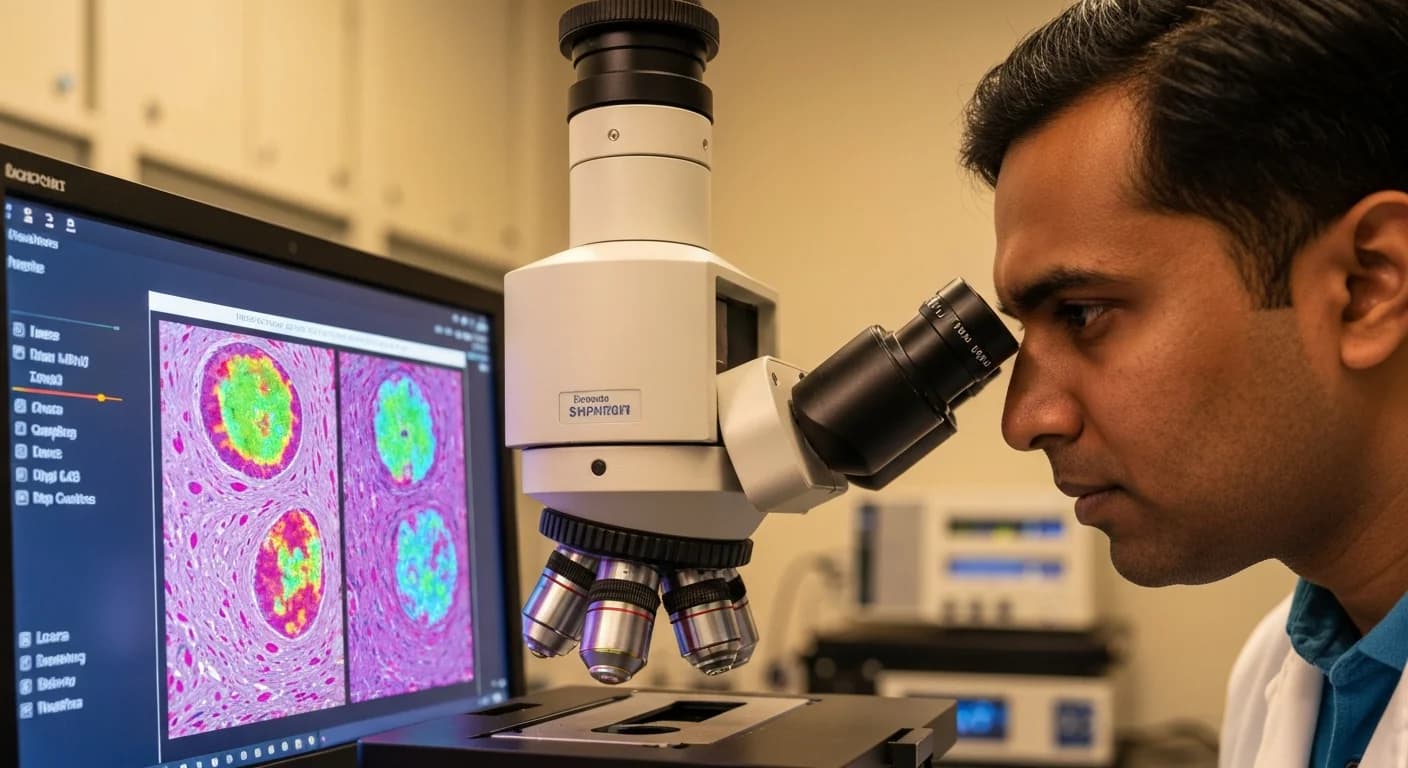

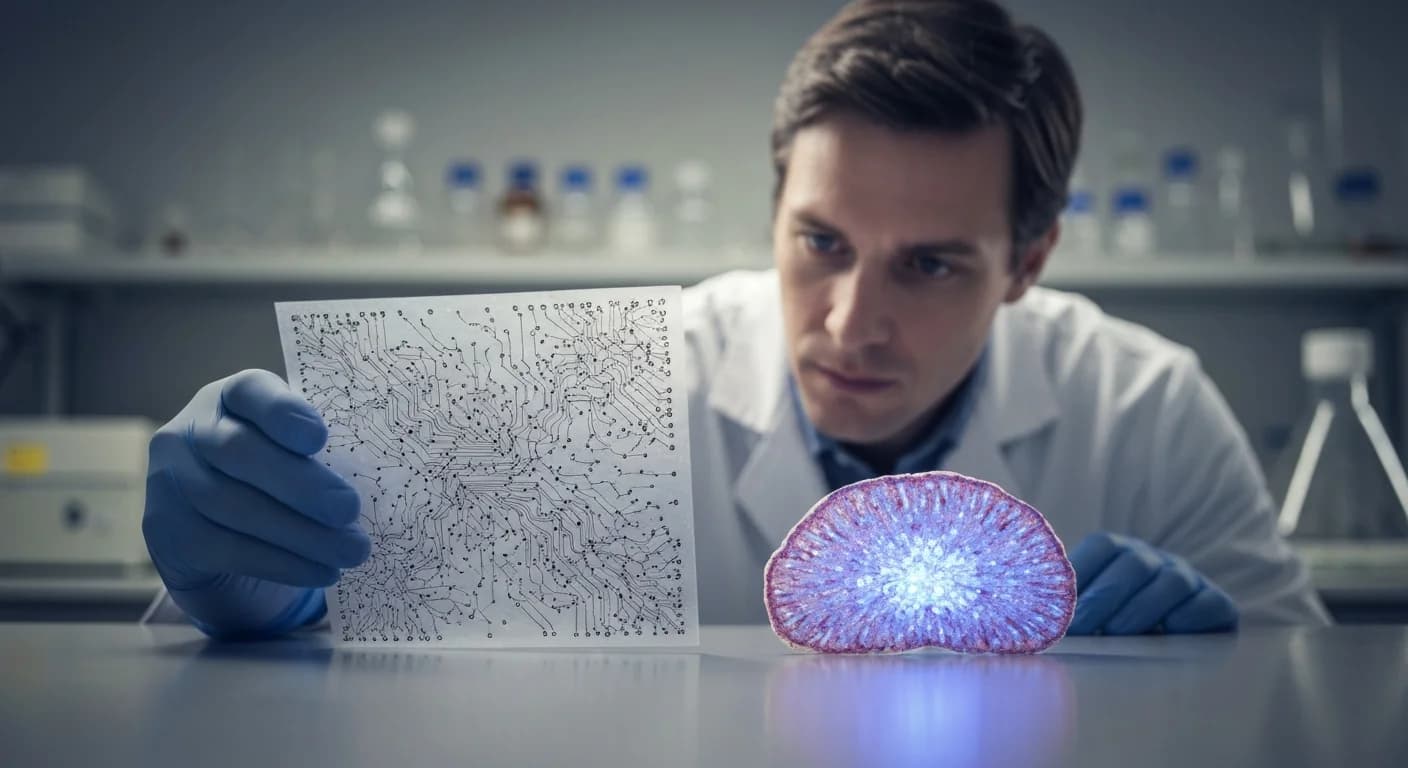

Northwestern's Printed Artificial Neurons Talk Back to Living Brain Cells

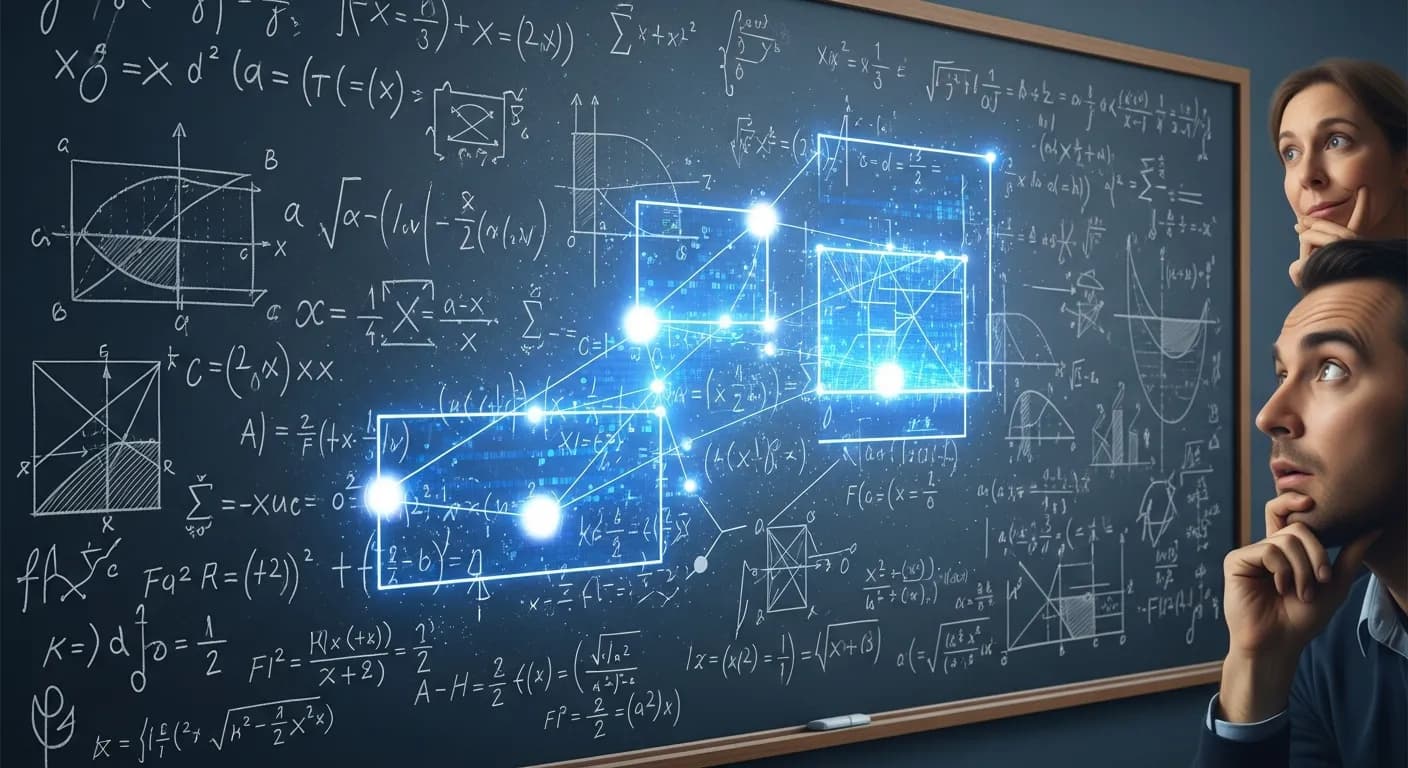

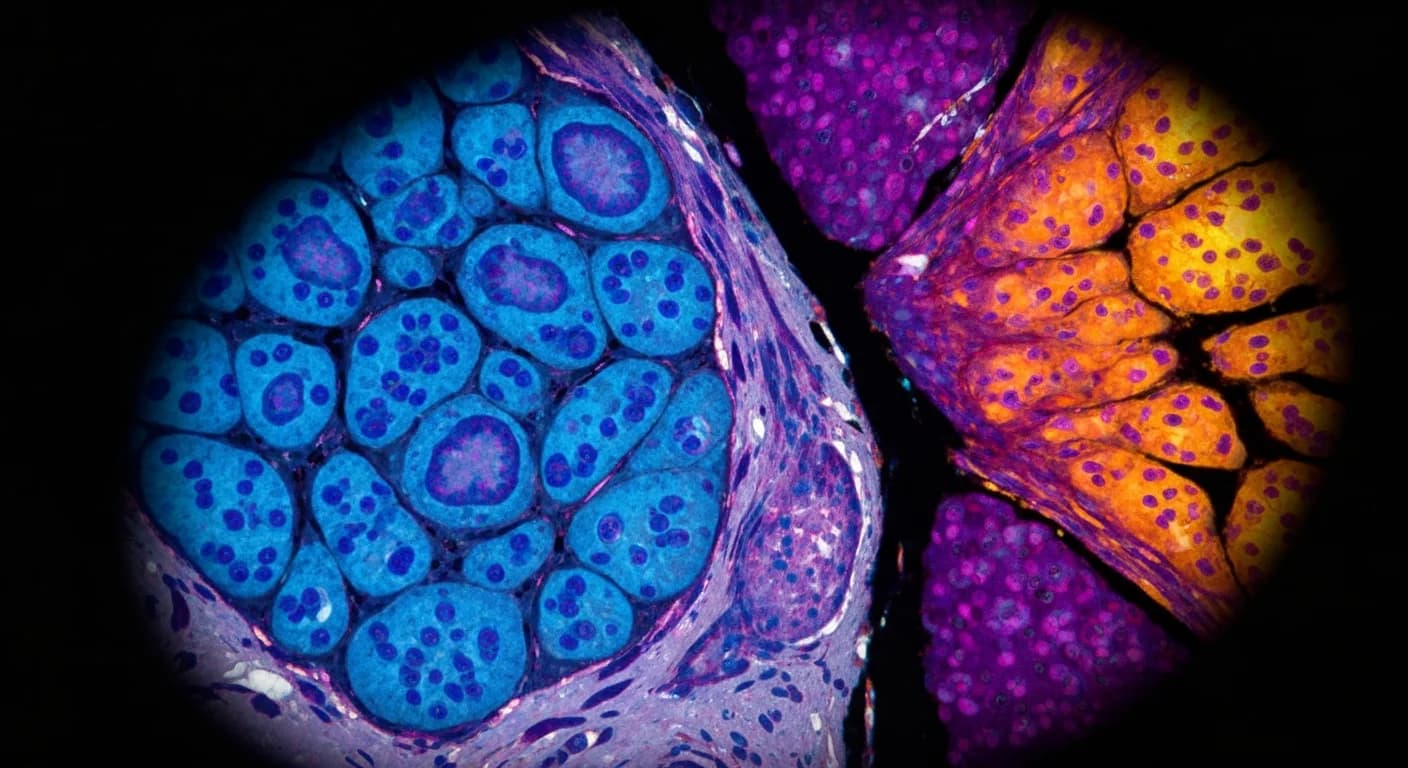

Northwestern engineers have printed soft, flexible artificial neurons that can activate living brain tissue. The result, reported in Nature Nanotechnology, points toward a new generation of brain-machine interfaces and brain-like computing hardware.