The Allen Institute for AI (AI2) has released OLMo Hybrid, a 7B-parameter language model that combines transformer attention with linear recurrent neural network (RNN) layers. The results suggest that hybrid architectures may be the next major step in model efficiency.

The fully open release includes model weights, training code, intermediate checkpoints, and complete training logs, in keeping with AI2's advocacy for transparent AI research.

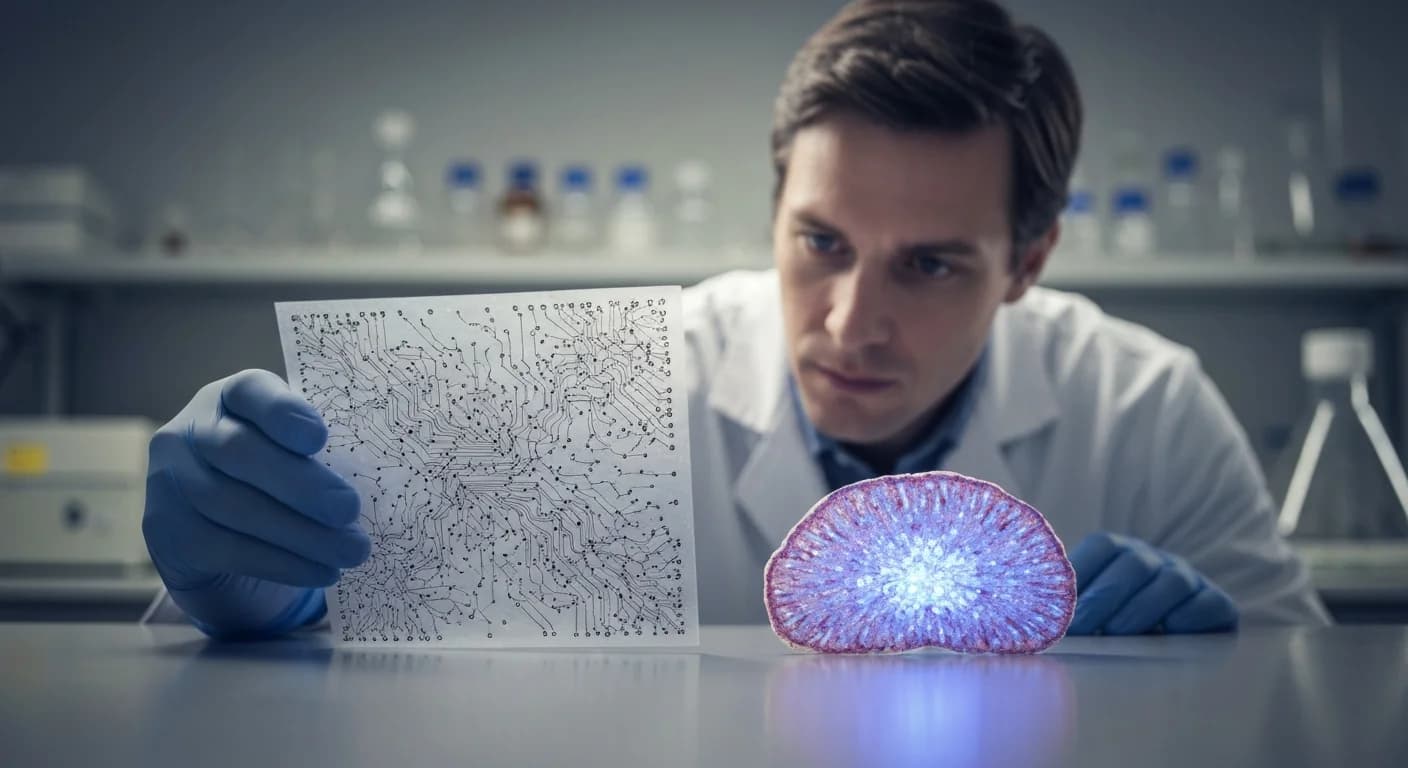

The Hybrid Approach

OLMo Hybrid interleaves standard transformer layers with Gated DeltaNet layers, a modern linear RNN design that remains parallelizable during training while offering expressive state dynamics. The core insight is that each architecture brings complementary strengths: transformers excel at recalling precise details from earlier in a sequence, while recurrent layers efficiently track evolving state across long contexts.
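To make "evolving state" concrete, here is a minimal sketch of a single gated delta-rule update, the recurrence that Gated DeltaNet layers are generally described as using. It is an illustration under assumptions, not AI2's implementation. The function names, the tiny 2x2 memory, and the fixed `alpha` and `beta` values are all hypothetical. A real layer would learn these gates per token, use multiple heads, and train with a parallel chunked form rather than this step-by-step loop.

```python
# Sketch of a gated delta-rule update (assumed form, not AI2's code):
#   S_new = alpha * (S - beta * (S k) k^T) + beta * v k^T
# S is a (d_v x d_k) memory matrix, k is a key, v is a value.
# alpha in [0, 1] decays old memory; beta in [0, 1] sets the write strength.

def gated_delta_step(S, k, v, alpha, beta):
    d_v, d_k = len(S), len(k)
    # What the memory currently returns when queried with key k.
    Sk = [sum(S[i][j] * k[j] for j in range(d_k)) for i in range(d_v)]
    # Erase that old association, decay the memory, then write the new value.
    return [
        [alpha * (S[i][j] - beta * Sk[i] * k[j]) + beta * v[i] * k[j]
         for j in range(d_k)]
        for i in range(d_v)
    ]

def read(S, q):
    """Query the memory: returns S q."""
    return [sum(row[j] * q[j] for j in range(len(q))) for row in S]

S = [[0.0, 0.0], [0.0, 0.0]]
k1, k2 = [1.0, 0.0], [0.0, 1.0]  # two orthogonal, unit-length keys

S = gated_delta_step(S, k1, [3.0, 4.0], alpha=1.0, beta=1.0)  # write k1 -> (3, 4)
S = gated_delta_step(S, k2, [7.0, 8.0], alpha=1.0, beta=1.0)  # write k2 -> (7, 8)
S = gated_delta_step(S, k1, [5.0, 6.0], alpha=1.0, beta=1.0)  # overwrite k1

print(read(S, k1))  # [5.0, 6.0]
print(read(S, k2))  # [7.0, 8.0]
```

The third write replaces the value stored under `k1` without disturbing `k2`, and the memory stays the same size throughout. That is the state-tracking behavior the recurrent layers contribute.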

By combining both in a single model, OLMo Hybrid achieves something neither architecture delivers alone: strong performance with substantially less training data.
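One way to picture the combination is as a layer schedule in which most layers are recurrent and attention appears at regular intervals. The sketch below assumes a hypothetical pattern of one attention layer in every four. This article does not state the ratio OLMo Hybrid actually uses, and the function and layer names are illustrative only.

```python
# Hypothetical layer schedule. The 3:1 ratio is an assumption for
# illustration; the actual OLMo Hybrid pattern is not given in this article.

def build_layer_schedule(num_layers, attention_every):
    """Every `attention_every`-th layer is attention; the rest are recurrent."""
    if attention_every < 1:
        raise ValueError("attention_every must be >= 1")
    return [
        "attention" if (i + 1) % attention_every == 0 else "gated_deltanet"
        for i in range(num_layers)
    ]

schedule = build_layer_schedule(num_layers=8, attention_every=4)
for index, kind in enumerate(schedule):
    print(index, kind)
```

With these illustrative settings, layers 3 and 7 are attention layers and the other six are recurrent.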

Dramatic Efficiency Gains

The headline result is striking. On MMLU, the widely used benchmark for general knowledge and reasoning, OLMo Hybrid reaches the same accuracy as OLMo 3 while using 49% fewer training tokens. Matching that accuracy with 51% of the tokens works out to about 1.96x, or roughly double, the data efficiency.
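The arithmetic behind "roughly double" can be checked in a few lines. In the sketch below, only the 49% figure comes from the reported result. The helper name and the 1-trillion-token baseline are hypothetical, chosen to make the numbers concrete.

```python
def data_efficiency_factor(fraction_fewer_tokens):
    """Tokens the baseline needs per token the more efficient model needs."""
    if not 0.0 <= fraction_fewer_tokens < 1.0:
        raise ValueError("fraction_fewer_tokens must be in [0, 1)")
    return 1.0 / (1.0 - fraction_fewer_tokens)

factor = data_efficiency_factor(0.49)
print(f"{factor:.2f}x")  # 1.96x

# Hypothetical baseline budget, used only to illustrate the saving.
baseline_tokens = 1_000_000_000_000
hybrid_tokens = baseline_tokens * (1.0 - 0.49)
print(f"{hybrid_tokens / 1e12:.2f} trillion tokens")  # 0.51 trillion tokens
```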

After mid-training, OLMo Hybrid outperforms OLMo 3 across all primary evaluation domains. Scaling-law analysis from AI2's research team also predicts that the token-savings factor grows with model size, suggesting that larger hybrid models could see even greater efficiency advantages.

Training at Scale

The model was trained on 3 trillion tokens using 512 NVIDIA Blackwell GPUs, a substantial compute investment that demonstrates the architecture's practical viability at scale. AI2 partnered with Lambda for compute infrastructure, and the collaboration produced detailed open metrics throughout the training process.

AI2 is releasing base, supervised fine-tuning (SFT), and direct preference optimization (DPO) stage models, giving researchers and developers multiple entry points for building on the work.

Why This Matters

The efficiency implications extend beyond academic benchmarks. If hybrid architectures consistently deliver comparable performance with half the training data, the economics of training frontier models shift meaningfully. Smaller organizations and research labs that cannot afford trillion-token training runs could produce competitive models with more modest data budgets.

The architecture also shows promise for long-context applications. Recurrent layers carry a fixed-size state, whereas attention must cache keys and values for every earlier token, so the recurrent layers handle evolving state more efficiently than pure attention at extended sequence lengths. This could reduce the inference cost of processing long documents and conversations.
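A rough memory estimate shows why this matters at long sequence lengths. The sketch below compares the key-value cache of an attention-only stack with a hybrid stack whose recurrent layers each hold one fixed-size state matrix per head. Every dimension here (layer counts, head counts, head size, 2-byte values, a single sequence) is a hypothetical choice for illustration, not OLMo Hybrid's actual configuration.

```python
# All dimensions below are hypothetical, picked for illustration only.

def kv_cache_bytes(seq_len, attn_layers, kv_heads, head_dim, bytes_per_value=2):
    """Keys and values cached for every token in every attention layer."""
    return 2 * seq_len * attn_layers * kv_heads * head_dim * bytes_per_value

def recurrent_state_bytes(rnn_layers, heads, key_dim, value_dim, bytes_per_value=2):
    """One fixed-size (key_dim x value_dim) state matrix per head per layer."""
    return rnn_layers * heads * key_dim * value_dim * bytes_per_value

def inference_memory(seq_len, attn_layers, rnn_layers):
    """Total per-sequence state: growing KV cache plus constant recurrent state."""
    return (
        kv_cache_bytes(seq_len, attn_layers, kv_heads=8, head_dim=128)
        + recurrent_state_bytes(rnn_layers, heads=8, key_dim=128, value_dim=128)
    )

for seq_len in (4_096, 65_536):
    pure = inference_memory(seq_len, attn_layers=32, rnn_layers=0)
    hybrid = inference_memory(seq_len, attn_layers=8, rnn_layers=24)
    print(f"{seq_len} tokens: attention-only {pure / 2**20:.0f} MiB, "
          f"hybrid {hybrid / 2**20:.0f} MiB")
```

The recurrent term does not depend on `seq_len`, so under these assumptions the gap between the two stacks widens as the context grows.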

As the AI field debates whether scaling laws are hitting diminishing returns, OLMo Hybrid suggests an alternative path forward: not just more data and compute, but smarter architectures that extract more capability from every training token.
