The Problem: AI's Insatiable Energy Appetite

Data centers globally consumed an estimated 415 terawatt-hours of electricity in 2024, roughly 1.5% of the world's total electricity use, and that figure is expected to double by 2030. Against this backdrop, a team at Tufts University has demonstrated that smarter architecture can deliver dramatic efficiency gains without sacrificing performance.

The research, led by Professor Matthias Scheutz at the Tufts School of Engineering along with co-authors Timothy Duggan, Pierrick Lorang, and Hong Lu, was published on arXiv and is set to be presented at the IEEE International Conference on Robotics and Automation (ICRA) in Vienna in June 2026.

How It Works: Symbolic Reasoning Meets Neural Networks

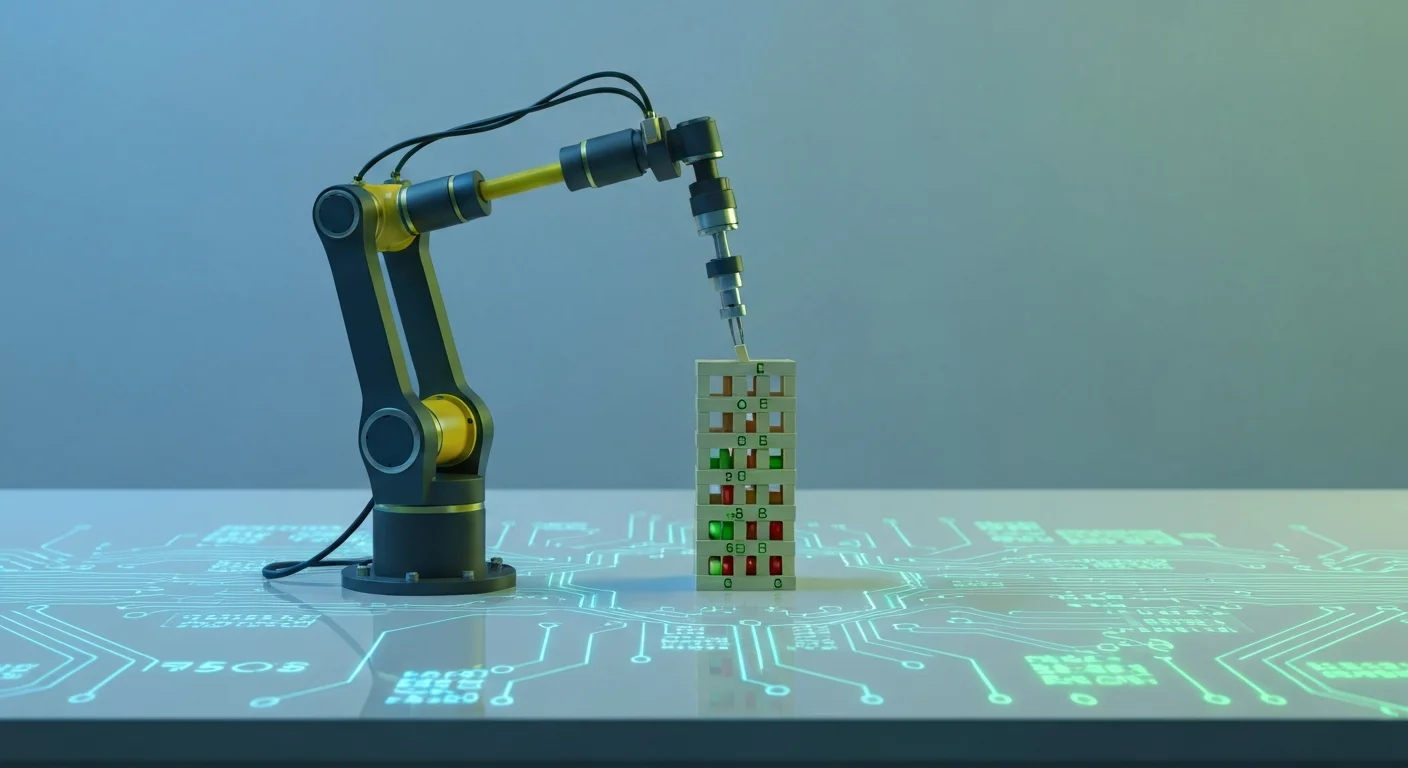

The core innovation is a hybrid "neuro-symbolic" approach to Vision-Language-Action (VLA) models — the systems that allow robots to process visual input and translate it into physical movements. Standard VLA models rely on massive datasets and brute-force pattern recognition, consuming enormous compute resources through trial and error.

The Tufts team took a different path. Their system layers symbolic reasoning — the kind of rule-based logic humans use for planning — on top of neural network perception. Rather than learning purely from data, the model can apply logical rules that constrain its search space.
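To make the idea of rules constraining a search space concrete, here is a minimal sketch in Python. It is not the Tufts system: the state encoding, the function names (`legal_moves`, `apply_move`, `plan`), and the use of breadth-first search are all assumptions chosen for illustration, and the puzzle state is written out by hand rather than perceived by a neural network. It only shows how a few symbolic rules for the Tower of Hanoi rule out illegal actions before any search or learning happens.

```python
from collections import deque


def legal_moves(state):
    """Yield (src, dst) peg pairs permitted by the Tower of Hanoi rules.

    `state` is a tuple of three tuples. Each inner tuple lists the disks on
    one peg from bottom to top; a larger number means a larger disk.
    (This encoding is a hypothetical choice for the sketch.)
    """
    for src, src_peg in enumerate(state):
        if not src_peg:
            continue  # rule: nothing can be moved from an empty peg
        disk = src_peg[-1]  # rule: only the top disk of a peg may move
        for dst, dst_peg in enumerate(state):
            if dst == src:
                continue
            # rule: a disk may never be placed on a smaller disk
            if not dst_peg or dst_peg[-1] > disk:
                yield src, dst


def apply_move(state, move):
    """Return the new state after moving the top disk from src to dst."""
    src, dst = move
    pegs = [list(peg) for peg in state]
    pegs[dst].append(pegs[src].pop())
    return tuple(tuple(peg) for peg in pegs)


def plan(start, goal):
    """Breadth-first search over rule-abiding states only.

    Returns a shortest list of moves from start to goal, or None.
    """
    queue = deque([(start, [])])
    seen = {start}
    while queue:
        state, path = queue.popleft()
        if state == goal:
            return path
        for move in legal_moves(state):
            nxt = apply_move(state, move)
            if nxt not in seen:
                seen.add(nxt)
                queue.append((nxt, path + [move]))
    return None


start = ((3, 2, 1), (), ())   # all three disks on the first peg
goal = ((), (), (3, 2, 1))    # all three disks on the third peg
moves = plan(start, goal)
print(len(moves))  # 7, the known minimum for three disks
```

Because illegal moves are never generated, the planner explores at most the 27 valid three-disk configurations. A purely data-driven learner has no such guarantee and has to discover the same constraints from examples.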

"A neuro-symbolic VLA can apply rules that limit the amount of trial and error during learning and get to a solution much faster," said Professor Scheutz.

The Numbers Are Striking

On the Tower of Hanoi puzzle, a classic sequential reasoning benchmark, the results were stark:

- Training energy: 99% reduction compared to standard VLA models, with training time falling from over 36 hours to just 34 minutes

- Operational energy: 95% reduction during inference

- Task success rate: 95% versus 34% for conventional approaches

- Generalization: 78% success on unfamiliar complex task variants, compared to 0% for standard models

A 99% cut in training energy works out to roughly a 100x reduction, and a 95% cut in inference energy to roughly 20x, all while dramatically outperforming the systems it was benchmarked against.
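The relationship between the percentage reductions and "times less" factors quoted above can be checked with a few lines of arithmetic. The sketch below uses only the figures reported in this article; the helper name `fold_reduction` is hypothetical, and because the baseline training time is given only as "over 36 hours", the time-based results are lower bounds.

```python
def fold_reduction(percent_reduction):
    """Convert a percentage reduction into an 'N times less' factor."""
    remaining_fraction = 1.0 - percent_reduction / 100.0
    return 1.0 / remaining_fraction


# Energy figures as reported in the article
training_fold = fold_reduction(99)    # about 100x less training energy
inference_fold = fold_reduction(95)   # about 20x less inference energy

# Training time figures as reported: over 36 hours versus 34 minutes
baseline_minutes = 36 * 60
neuro_symbolic_minutes = 34
time_speedup = baseline_minutes / neuro_symbolic_minutes
time_reduction_pct = 100 * (1 - neuro_symbolic_minutes / baseline_minutes)

print(f"training energy:  ~{training_fold:.0f}x less")
print(f"inference energy: ~{inference_fold:.0f}x less")
print(f"training time:    at least {time_speedup:.1f}x faster "
      f"({time_reduction_pct:.1f}% shorter)")
```

The training-time ratio (about 64x) and the training-energy ratio (about 100x) are separate measurements and need not match, since energy also depends on how much power the hardware draws during each run.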

Why It Matters Beyond the Lab

The implications extend well beyond academic benchmarks. The neuro-symbolic approach also addresses a persistent weakness in large AI models: hallucinations and logical errors. By grounding decisions in symbolic rules, the system produces more reliable and interpretable outputs.

For the robotics industry specifically, this could be transformative. Energy-efficient models that generalize to new tasks without retraining from scratch would make autonomous robots far more practical in manufacturing, logistics, and household environments.

The Bigger Picture

The Tufts research arrives at a moment when the AI industry is under increasing scrutiny for its environmental footprint. While companies race to build ever-larger models and ever-bigger data centers, this work suggests that architectural innovation — not just scaling — may hold the key to sustainable AI development. Whether the approach scales to more complex real-world tasks remains to be seen, but the early results offer a compelling proof of concept that efficiency and capability need not be at odds.