Every few months, an AI lab claims its latest model is "approaching human-level reasoning." The ARC-AGI-3 benchmark just called their bluff — and the results are devastating.

The third iteration of the Abstraction and Reasoning Corpus, published by ARC Prize, tested every major frontier AI model on a set of visual pattern-matching and reasoning tasks. The results: not a single model broke 1%. Gemini 3.1 Pro led the pack with a score of 0.37%. The same tasks were solved by 100% of human participants on their first attempt.

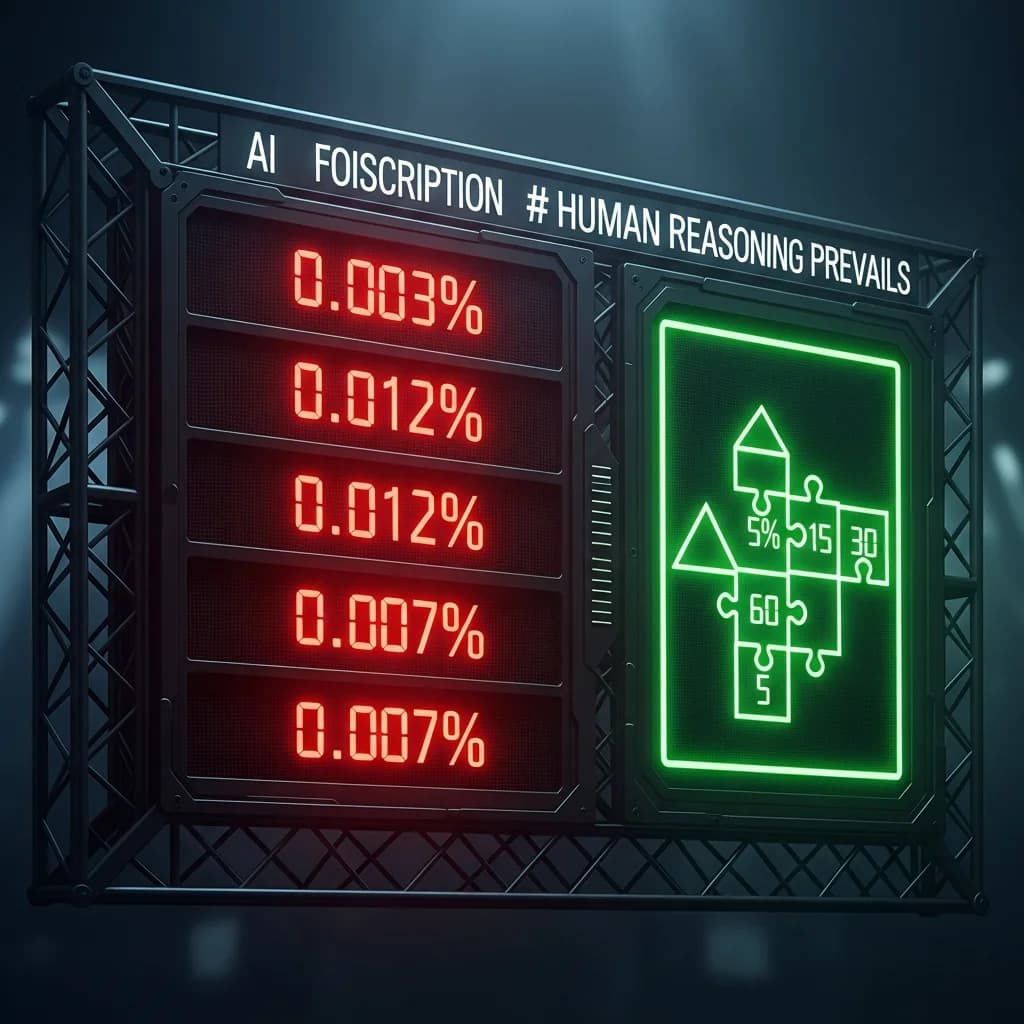

The Scores

The full leaderboard reads like a humiliation parade for the AI industry:

- Gemini 3.1 Pro: 0.37%
- Claude Opus 4: 0.29%
- GPT-5: 0.22%
- Llama 4 Behemoth: 0.14%
- Grok 4.2: 0.00%

To put this in perspective: every human test subject — regardless of age, education, or technical background — solved these problems correctly the first time they tried. The gap between human performance (100%) and the best AI performance (0.37%) isn't a gap. It's a chasm.

What ARC-AGI-3 Actually Tests

The ARC benchmark series, created by AI researcher and Keras creator François Chollet, is specifically designed to measure abstract reasoning — the ability to identify patterns in novel situations and apply flexible rules that were never seen during training.

Each task presents a small grid of colored cells with an input-output pattern. The test taker must figure out the underlying rule and apply it to a new input. The tasks are trivially easy for humans because they require the kind of basic abstraction that comes naturally to human cognition: spatial reasoning, object persistence, symmetry detection, and rule inference.
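To make the task format concrete, here is a minimal Python sketch of one puzzle in this style. Everything in it is an illustrative assumption rather than the benchmark's actual data format or scoring code: grids are represented as lists of integers (one per cell color), and the example grids, `CANDIDATE_RULES`, and `infer_rule` are hypothetical names invented for this sketch.

```python
from typing import Callable, List, Optional, Tuple

Grid = List[List[int]]          # each integer stands for one cell color
Rule = Callable[[Grid], Grid]
Example = Tuple[Grid, Grid]     # (input grid, expected output grid)


def mirror_horizontally(grid: Grid) -> Grid:
    """Reverse every row, so the grid is mirrored left to right."""
    return [row[::-1] for row in grid]


def flip_vertically(grid: Grid) -> Grid:
    """Reverse the order of the rows, so the grid is flipped top to bottom."""
    return [row[:] for row in grid[::-1]]


def transpose(grid: Grid) -> Grid:
    """Swap rows and columns."""
    return [list(column) for column in zip(*grid)]


# Hypothetical, hand-written pool of rules the toy solver may choose from.
CANDIDATE_RULES: List[Rule] = [flip_vertically, transpose, mirror_horizontally]


def infer_rule(examples: List[Example]) -> Optional[Rule]:
    """Return the first candidate rule that reproduces every example output."""
    for rule in CANDIDATE_RULES:
        if all(rule(inp) == out for inp, out in examples):
            return rule
    return None


# Two made-up demonstration pairs that share one hidden rule.
examples: List[Example] = [
    ([[1, 0, 0], [2, 0, 0]], [[0, 0, 1], [0, 0, 2]]),
    ([[3, 3, 0], [0, 4, 0]], [[0, 3, 3], [0, 4, 0]]),
]
test_input: Grid = [[5, 0], [0, 6]]

rule = infer_rule(examples)
prediction = rule(test_input) if rule is not None else None
print(prediction)
```

The toy solver only works because the right rule already sits in a short, hand-written list. The tasks described here come with no such list; the test taker has to come up with the rule from the examples alone.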

ARC-AGI-3 raises the difficulty from previous iterations by introducing more compositional reasoning — tasks that require chaining multiple abstract rules together. But "more difficult" is relative. Humans still found them straightforward.
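A rough sketch of what "chaining multiple abstract rules" could look like in code, under the same kind of assumptions: grids as lists of integers, and hypothetical helpers (`mirror_horizontally`, `recolor`, `compose`) that are not part of any ARC tooling. The made-up task below is explained by neither rule alone, only by applying one after the other.

```python
from functools import reduce
from typing import Callable, List

Grid = List[List[int]]          # each integer stands for one cell color
Rule = Callable[[Grid], Grid]


def mirror_horizontally(grid: Grid) -> Grid:
    """Reverse every row, so the grid is mirrored left to right."""
    return [row[::-1] for row in grid]


def recolor(old: int, new: int) -> Rule:
    """Build a rule that repaints every cell of color `old` with color `new`."""
    def apply(grid: Grid) -> Grid:
        return [[new if cell == old else cell for cell in row] for row in grid]
    return apply


def compose(*rules: Rule) -> Rule:
    """Chain rules so that each one runs on the previous rule's output."""
    return lambda grid: reduce(lambda current, rule: rule(current), rules, grid)


# A made-up task whose output needs both steps.
task_input: Grid = [[1, 0], [0, 3]]
task_output: Grid = [[0, 2], [3, 0]]

mirror_only = mirror_horizontally(task_input)
recolor_only = recolor(1, 2)(task_input)
chained = compose(mirror_horizontally, recolor(1, 2))(task_input)

print(mirror_only == task_output)   # single rule: mirror
print(recolor_only == task_output)  # single rule: recolor
print(chained == task_output)       # both rules, applied in sequence
```

Each added step multiplies the number of rule combinations a solver would have to consider, which is one plausible reading of why compositional tasks are harder to brute-force than single-rule ones.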

What This Means for AGI Claims

The benchmark's creator has been blunt about the implications: in his view, current AI models, regardless of scale, are fundamentally limited in their ability to reason abstractly about novel problems. They can simulate reasoning on tasks similar to their training data, but when confronted with genuinely new patterns, they collapse.

This directly challenges the narrative from major AI labs that scaling (more parameters, more data, more compute) is a reliable path to artificial general intelligence. ARC-AGI-3 suggests that the gap between statistical pattern matching and genuine reasoning isn't closing with scale. Closing it may require entirely different approaches.

The Industry Response

AI labs have been notably quiet about ARC-AGI-3. None of the major model providers has issued a statement about the benchmark results. This silence contrasts sharply with the eager press releases that typically accompany favorable benchmark scores.

Some researchers have pushed back on the benchmark's relevance, arguing that abstract visual reasoning is just one dimension of intelligence and that current models excel at many tasks humans find difficult. That's true — but it misses the point. ARC-AGI-3 isn't measuring whether AI can do hard things. It's measuring whether AI can do easy things that require actual understanding.

The Uncomfortable Truth

ARC-AGI-3 doesn't prove AGI is impossible. It proves that we don't have it yet, and that the current paradigm of large language models isn't obviously converging toward it. The tasks that stump billion-dollar AI systems are the same ones a child can solve by looking at a picture for ten seconds.

That's not a benchmark failure. That's a reality check.