On December 9, 2025, Microsoft Research published GigaTIME in Cell. The multimodal AI model, developed in collaboration with Providence Health and the University of Washington, addresses a longstanding bottleneck in cancer research: the cost and complexity of advanced tumor imaging.

Turning Basic Slides Into Protein-Level Maps

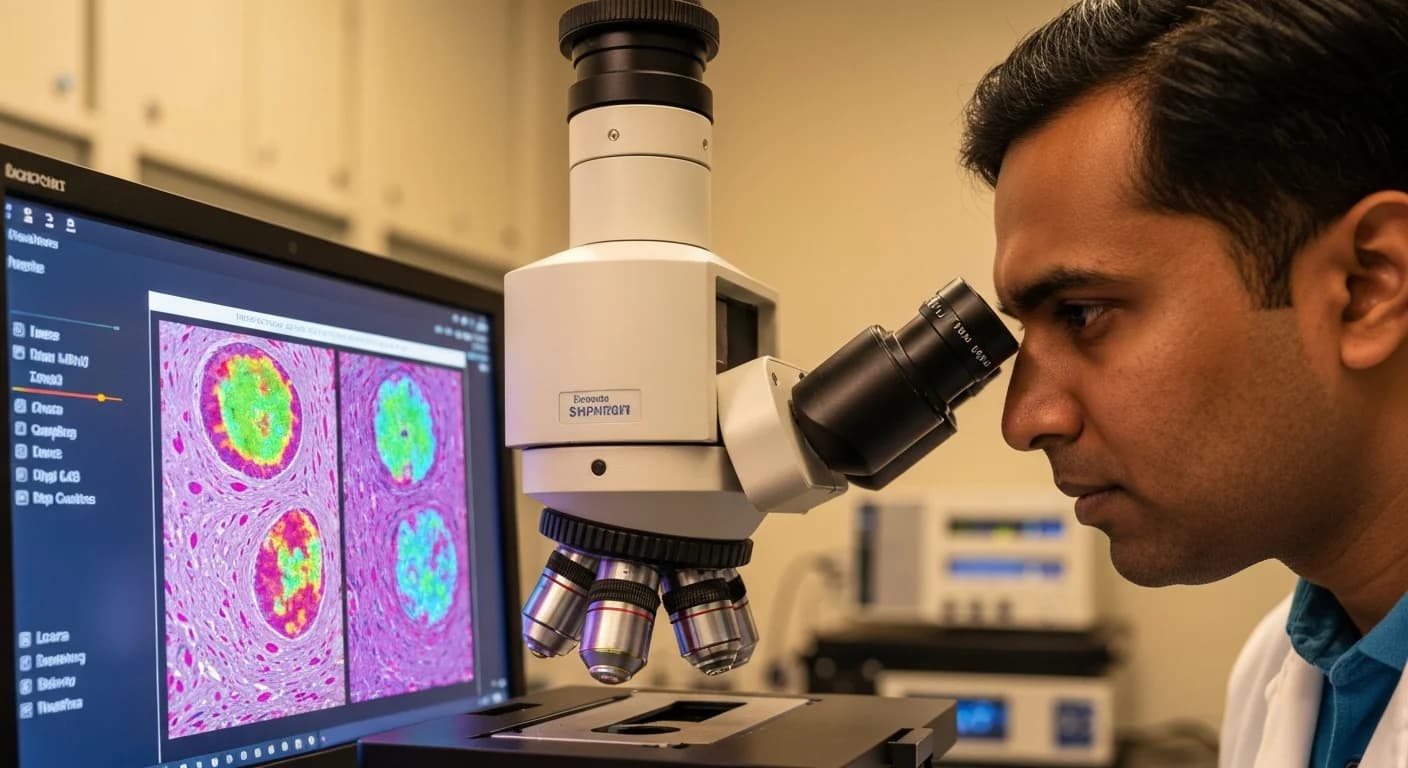

Standard hematoxylin and eosin (H&E) pathology slides are cheap, routine, and available at virtually every hospital. Multiplex immunofluorescence (mIF) imaging, which reveals the protein-level interactions between immune cells and tumors, is expensive, slow, and available only at specialized centers.

GigaTIME bridges this gap. The model takes a standard H&E slide and generates a virtual mIF image across 21 protein channels, in effect turning a $5 to $10 test into the informational equivalent of thousands of dollars' worth of specialized imaging. This could dramatically expand access to advanced cancer diagnostics, particularly in community hospitals and developing regions that lack mIF infrastructure.
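To make the input and output concrete, here is a minimal sketch of the data shapes involved: an RGB H&E image goes in, and an image with 21 channels comes out. It assumes NumPy is installed. The function names, the tile size, and the placeholder "prediction" are all hypothetical; this is not GigaTIME's actual interface or architecture, which the article does not describe.

```python
import numpy as np

NUM_CHANNELS = 21  # channel count reported in the article


def predict_tile_stub(he_tile: np.ndarray) -> np.ndarray:
    """Hypothetical stand-in for the model (NOT GigaTIME itself).

    Maps an (H, W, 3) RGB tile to an (H, W, 21) array of values in [0, 1].
    It simply repeats the normalized grayscale intensity in every channel.
    """
    gray = he_tile.mean(axis=2, keepdims=True) / 255.0  # shape (H, W, 1)
    return np.repeat(gray, NUM_CHANNELS, axis=2)


def translate_slide(he_slide: np.ndarray, tile: int = 256) -> np.ndarray:
    """Run the stub tile by tile and stitch the results into one image."""
    h, w, _ = he_slide.shape
    out = np.zeros((h, w, NUM_CHANNELS), dtype=np.float32)
    for y in range(0, h, tile):
        for x in range(0, w, tile):
            patch = he_slide[y:y + tile, x:x + tile]
            out[y:y + tile, x:x + tile] = predict_tile_stub(patch)
    return out


# Synthetic stand-in for a scanned H&E region (random pixels, not real data).
slide = np.random.default_rng(0).integers(0, 256, size=(512, 768, 3), dtype=np.uint8)
virtual_mif = translate_slide(slide)
print(virtual_mif.shape)  # (512, 768, 21)
```

The point of the sketch is the shape change from 3 color channels to 21 protein channels; a real model would replace the stub with a learned prediction.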

Massive Clinical Validation

The model was trained on a Providence dataset of 40 million cells with paired H&E and mIF images. Microsoft then applied GigaTIME at scale, analyzing slides from 14,256 cancer patients across 51 hospitals and more than 1,000 clinics within the Providence system.

The result was a virtual population of approximately 300,000 mIF images spanning 24 cancer types and 306 cancer subtypes. Analysis of this virtual population uncovered 1,234 statistically significant associations linking protein activations with clinical attributes such as biomarkers, tumor staging, and patient survival outcomes.
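The article does not say how statistical significance was determined. When many protein-attribute pairs are screened at once, one common safeguard is to control the false discovery rate with the Benjamini-Hochberg procedure. The sketch below illustrates that general technique on made-up p-values; it is an assumption for illustration, not the study's documented method.

```python
def benjamini_hochberg(p_values, alpha=0.05):
    """Return a list of booleans: True where the hypothesis is rejected
    under Benjamini-Hochberg false discovery rate control at level alpha."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    # Find the largest 1-based rank k whose sorted p-value is <= (k / m) * alpha.
    cutoff_rank = 0
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= rank / m * alpha:
            cutoff_rank = rank
    # Reject every hypothesis ranked at or below the cutoff.
    rejected = [False] * m
    for idx in order[:cutoff_rank]:
        rejected[idx] = True
    return rejected


# Illustrative p-values for hypothetical (protein channel, clinical attribute) pairs.
p_values = [0.001, 0.008, 0.039, 0.041, 0.042, 0.06, 0.074, 0.205, 0.212, 0.216]
significant = benjamini_hochberg(p_values, alpha=0.05)
print(sum(significant))  # 2
```

Five of these p-values fall below 0.05 on their own, but only two survive the correction, which is why a count of significant associations means more when the correction method is stated.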

Open Access

In a move that could accelerate adoption, Microsoft has released GigaTIME publicly through Azure AI Foundry Labs and on Hugging Face. The open availability means research institutions worldwide can begin integrating the model into their oncology workflows without licensing barriers.

Why It Matters

Cancer treatment increasingly depends on understanding the tumor microenvironment: how cancer cells interact with the immune system at the molecular level. Until now, gaining this understanding has required expensive equipment and specialized expertise, which limited research to well-funded academic medical centers.

GigaTIME's ability to extract protein-level insights from routine pathology slides could democratize this capability. For clinical researchers, it opens the door to retrospective studies on existing slide archives. For patients, it may eventually mean more precise treatment decisions informed by deeper biological understanding of their specific tumor.

The model also demonstrates a growing pattern in medical AI: rather than replacing clinicians, the most impactful tools are those that make expensive diagnostic information accessible at commodity prices.