Alibaba's Qwen team has released Qwen 3.5-Omni, a native multimodal model that processes text, images, audio, and video within a single unified architecture. The model has attracted attention not just for its benchmark performance but for displaying an unexpected emergent capability: the ability to write functional code from spoken voice instructions and video input without being specifically trained to do so.

Architecture and Capabilities

Qwen 3.5-Omni uses a novel Thinker-Talker architecture with Hybrid-Attention Mixture of Experts across all modalities. The model comes in three sizes — Plus, Flash, and Light — with the flagship Plus variant supporting a 256,000-token context window. That is enough to process over ten hours of audio or more than 400 seconds of 720p video at one frame per second.

The model was pre-trained from the ground up on more than 100 million hours of audiovisual data, making it a truly native multimodal system rather than a text model with audio capabilities added afterward. It supports speech recognition across 113 languages and dialects, including 74 languages and 39 Chinese dialects.

The Emergent Surprise

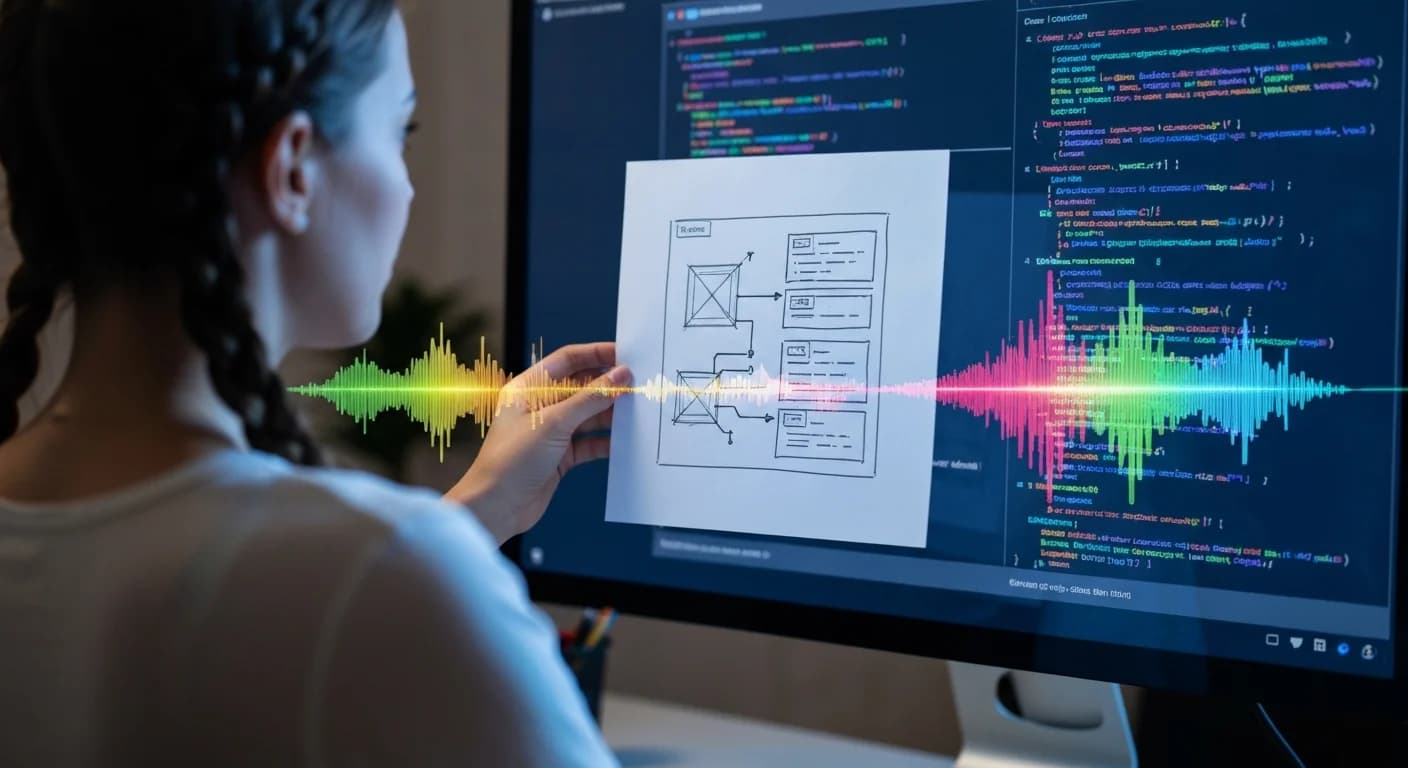

The most striking finding is what the Qwen team calls "audio-visual vibe coding." In tests, the model demonstrated the ability to take a hand-drawn sketch held up to a camera, listen to spoken instructions describing the desired behavior, and generate a working React webpage. The team reports that this capability was never explicitly trained — it emerged as a byproduct of scaling native multimodal processing.

As reported by The Decoder, this represents a meaningful step toward AI systems that can interact with developers the way a human collaborator would: by watching, listening, and understanding context simultaneously rather than relying solely on text prompts.

Benchmark Results

Qwen 3.5-Omni achieved state-of-the-art results on 215 audio and audio-visual understanding subtasks. According to Alibaba's technical reports, the Plus variant surpasses Google's Gemini 3.1 Pro in general audio understanding, reasoning, recognition, and translation, while achieving parity in audio-visual comprehension.

A Strategic Shift on Openness

Notably, Alibaba has broken from its established pattern of open-sourcing Qwen models. The most capable Qwen 3.5-Omni variants are closed-source and available only through API access, as reported by WinBuzzer. This marks a significant departure for a company that had built developer goodwill through consistent open releases.

The decision likely reflects the competitive and commercial pressures facing Chinese AI labs as their models approach frontier performance levels. With DeepSeek V4 expected to launch under Apache 2.0 in the coming weeks, Alibaba may be calculating that its most advanced capabilities are too valuable to give away.

What It Means

The emergence of untrained capabilities in multimodal models adds weight to the argument that scaling native multimodal training produces qualitatively different results from bolting modalities onto text-first systems. For developers, the practical implications are significant: if future models can reliably interpret spoken instructions paired with visual context, the interface for building software could shift dramatically from text-based prompting toward more natural, conversational interaction.