Grammarly, one of the most widely used writing tools in the world, found itself at the center of a controversy this week after its "Expert Review" feature was found to be using AI to simulate feedback attributed to real-life journalists and editors — without their knowledge or consent.

The feature, which was designed to give users editorial-style feedback on their writing, presented AI-generated critiques as if they came from named human experts. The individuals whose names and professional identities were used had not agreed to participate.

What Happened

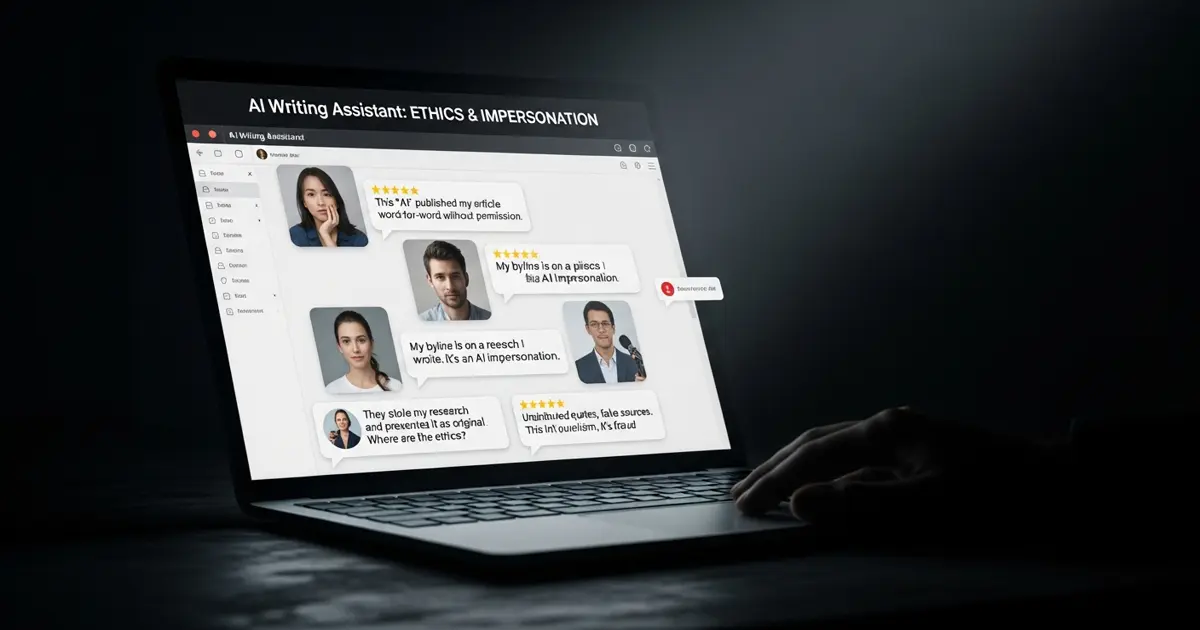

The "Expert Review" feature surfaced AI-generated writing feedback styled to appear as if it came from specific, real journalists. The implication, intentional or not, was that actual human experts were reviewing and commenting on user content. They weren't: the critiques were machine-generated, and the presentation borrowed real professional identities to lend them credibility.

The discovery drew immediate criticism. The core complaint wasn't about AI-generated feedback itself — it was about using real people's names and professional identities to represent something they had no part in producing.

Superhuman CEO Weighs In

Shishir Mehrotra, CEO of Superhuman, Grammarly's parent company, commented publicly on the controversy. He said the feature was not intended to impersonate real-life journalists, but notably stopped short of defending how it was actually executed.

That distinction matters. Intent and execution diverged significantly here. Whatever the product team had in mind, the result was a feature that attached real people's names to AI output without their consent.

Why This Matters

This is part of a broader pattern emerging as AI gets embedded into mainstream productivity tools. Companies are racing to add AI-powered features that feel authoritative and human — and sometimes the shortcuts taken to achieve that feeling cross ethical lines.

Using real names to give AI output a veneer of human expertise is a shortcut that:

- Violates consent — the people named didn't agree to have their professional identities used

- Misleads users — users may believe they're getting genuine human feedback when they're not

- Creates reputational risk — the named individuals could be associated with advice or critique they never gave

Beyond Mehrotra's remarks, Grammarly has not yet issued a detailed public statement addressing the specific concern that real journalists' identities were used.

The Bigger Picture

As AI writing tools become standard in professional workflows, the question of what constitutes genuine expert review versus AI simulation is increasingly important. Grammarly's misstep highlights what happens when that line gets blurred — not through malice, but through a product decision that wasn't thought through carefully enough.

The AI industry is going to have to get much more explicit about when users are interacting with AI-generated content versus human-produced expertise. Features that obscure that line — even unintentionally — are going to keep generating controversy.