Meta has released a new version of one of the most useful open-source computer vision models.

SAM 3.1 — the latest iteration of Meta's Segment Anything Model — is now available on Hugging Face and GitHub, with inference code, finetuning code, downloadable checkpoints, and example notebooks. The release also confirmed that SAM 3 technology is coming to Instagram Edits and Vibes in the Meta AI app.

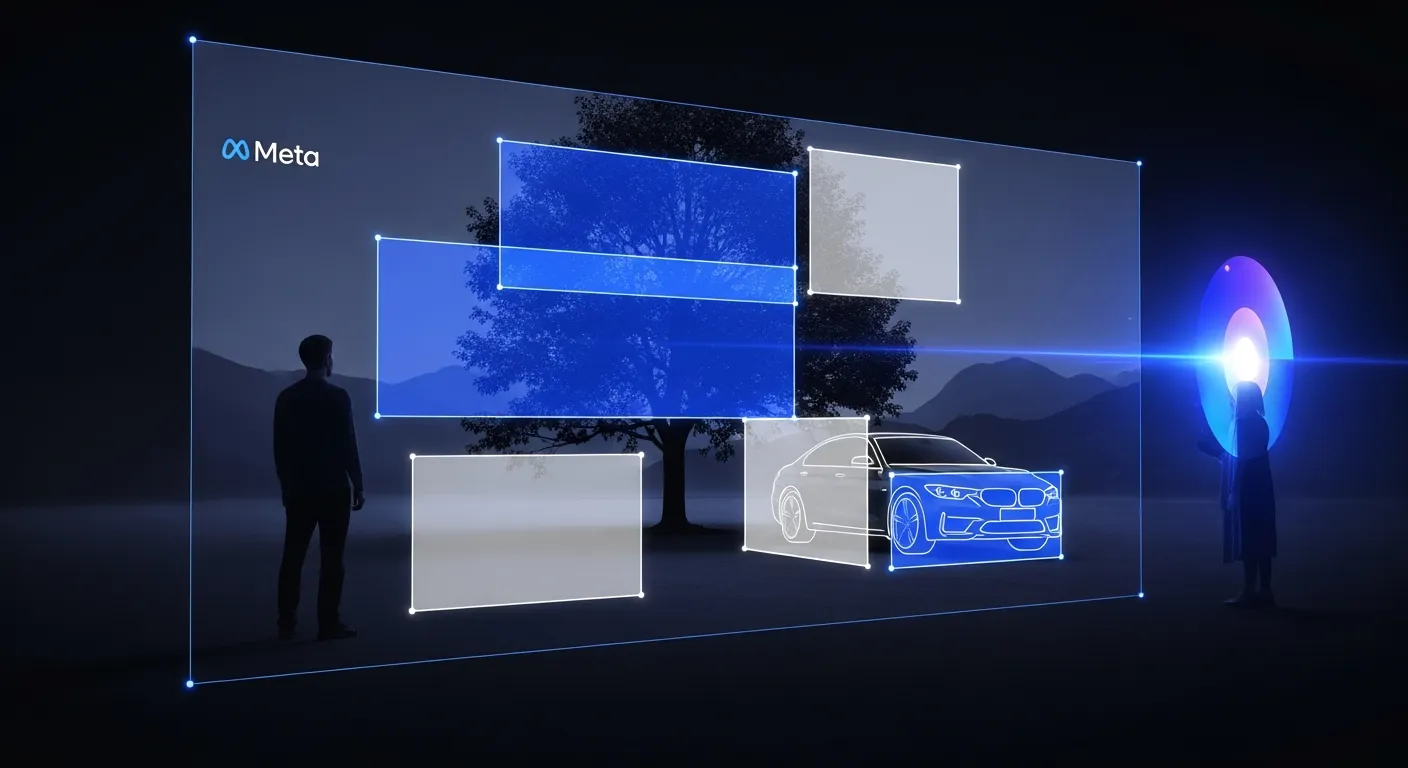

What SAM Does

Segment Anything is exactly what it sounds like. You point at something with a click, a box, a text phrase, or another prompt, and SAM precisely outlines and isolates it, in images or in video.

This is the kind of technology that makes "remove the background" buttons work well. It's what powers "select the person" in photo editors. SAM is the open-source version that anyone can build with, study, or improve.
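To make the "remove the background" idea concrete, here is a minimal sketch of what an editor can do once a segmentation model has produced a mask. It does not call SAM. The mask is written by hand, the function name `cutout_with_mask` is hypothetical, and the image is a plain nested list so the example runs with no dependencies. Real code would more likely use NumPy arrays and a mask predicted by the model.

```python
from typing import List, Tuple

RGB = Tuple[int, int, int]
RGBA = Tuple[int, int, int, int]


def cutout_with_mask(image: List[List[RGB]], mask: List[List[bool]]) -> List[List[RGBA]]:
    """Return an RGBA copy of `image` in which pixels outside `mask` are transparent.

    `mask` stands in for the per-pixel output of a segmentation model:
    True means "this pixel belongs to the selected object".
    """
    if len(image) != len(mask) or any(len(r) != len(m) for r, m in zip(image, mask)):
        raise ValueError("image and mask must have the same height and width")
    return [
        # Alpha 255 keeps the pixel visible, alpha 0 makes it fully transparent.
        [(r, g, b, 255 if keep else 0) for (r, g, b), keep in zip(img_row, mask_row)]
        for img_row, mask_row in zip(image, mask)
    ]


# Toy 3x3 grey image with a hand-written mask that selects only the centre pixel.
image = [[(200, 200, 200)] * 3 for _ in range(3)]
mask = [
    [False, False, False],
    [False, True, False],
    [False, False, False],
]
rgba = cutout_with_mask(image, mask)
```

The quality of the cutout depends entirely on how accurate the mask is, which is the part a model like SAM is responsible for.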

What's New in 3.1

The headline addition in 3.1 is finetuning support. Previous releases included inference code — you could run the model — but 3.1 adds the training infrastructure to adapt it to new domains. Medical imaging, satellite imagery, industrial inspection, autonomous vehicles: any field with specialized segmentation needs can now finetune SAM 3 on domain-specific data.

That matters because "segment anything" works well for general consumer images, but specialized applications need a model that understands their specific objects, lighting conditions, and contexts. Finetuning support turns SAM from a useful tool into a foundation model that specialists can actually build on.
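One common way to check whether a general-purpose model is good enough on specialized data, before and after finetuning, is intersection over union (IoU) between a predicted mask and a hand-labeled one. The sketch below is an illustration under that assumption and is not taken from the SAM 3.1 release. The function name `mask_iou` and the toy masks are hypothetical.

```python
from typing import List, Sequence


def mask_iou(pred: List[Sequence[int]], target: List[Sequence[int]]) -> float:
    """Intersection over union of two binary masks with the same shape.

    Returns 1.0 when both masks are empty, treating "nothing to find,
    nothing predicted" as a perfect match.
    """
    if len(pred) != len(target) or any(len(p) != len(t) for p, t in zip(pred, target)):
        raise ValueError("masks must have the same height and width")
    intersection = 0
    union = 0
    for p_row, t_row in zip(pred, target):
        for p, t in zip(p_row, t_row):
            intersection += int(bool(p) and bool(t))
            union += int(bool(p) or bool(t))
    return intersection / union if union else 1.0


# Toy masks: the prediction and the label agree on 2 pixels out of 4 marked in total.
pred = [
    [1, 1, 0],
    [0, 1, 0],
]
target = [
    [1, 0, 0],
    [0, 1, 1],
]
score = mask_iou(pred, target)
```

A low average IoU on a domain's labeled examples is one signal that finetuning on that domain may be worth the effort.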

The Consumer Application

Meta confirmed that SAM 3 is headed to Instagram Edits and to Vibes in the Meta AI app, two of the company's consumer video and photo editing surfaces. For most users, that means better object selection, cleaner cutouts, and more accurate background editing in tools they already have.

It's also a significant distribution play: SAM's capabilities could reach a very large consumer audience through apps people are already using, not just developers who build with the open-source release.

Why Open-Source Matters Here

Meta has been consistently open-sourcing its computer vision and AI research. SAM, Llama, and related models have become foundational pieces of the AI ecosystem precisely because they're available to build with, study, and improve.

SAM 3.1's finetuning release extends that pattern. Instead of keeping the best capabilities inside Meta products, the company publishes the tools that let the broader research and developer community push the technology further — and build applications Meta's own teams haven't imagined yet.