OpenAI's Codex just got meaningfully more powerful for production engineering teams.

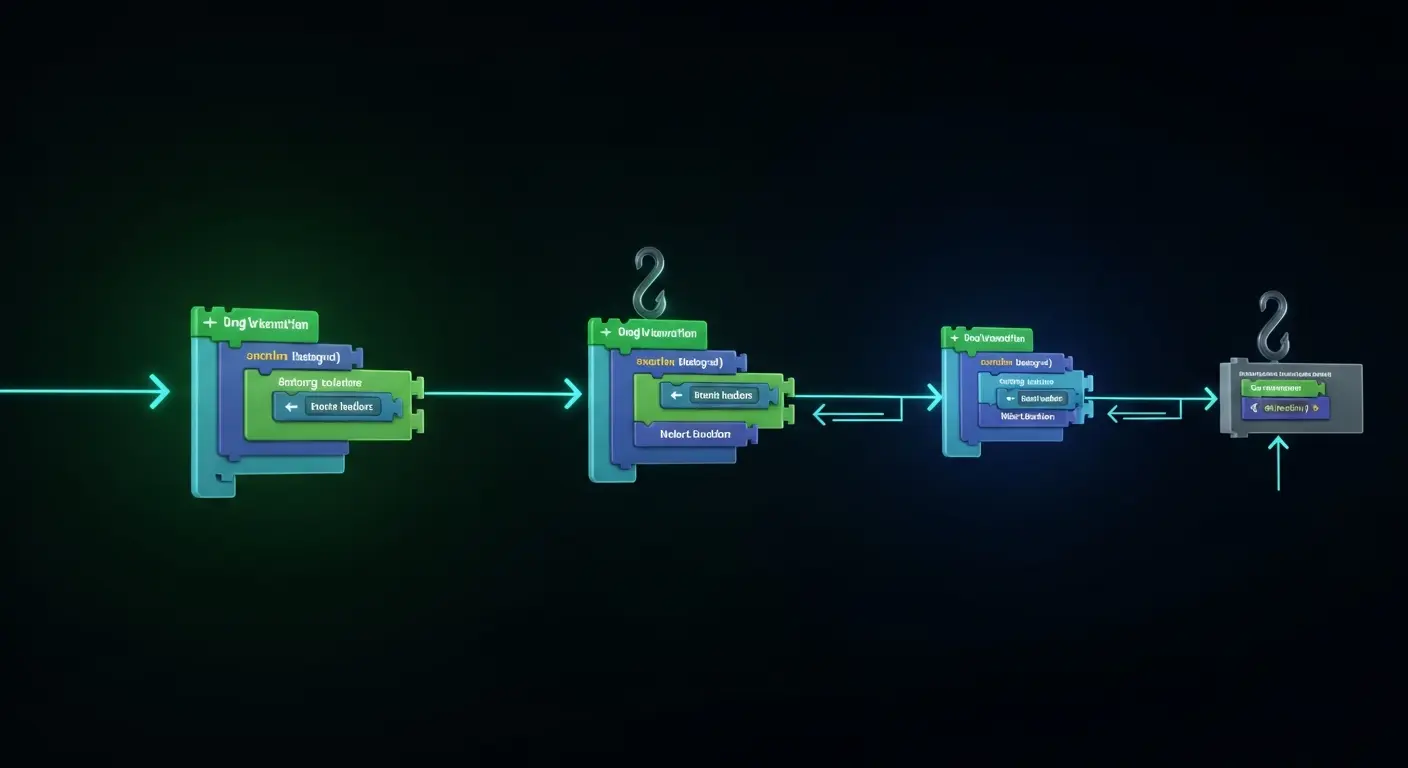

The company shipped Codex Hooks this week, a feature that lets developers run deterministic scripts at specific points in the Codex task lifecycle. The key moments are before a task executes (the pre-hook) and after it completes (the post-hook).

It's a small feature with large implications for how teams actually use AI in engineering workflows.

What Hooks Do

The core use case is quality gates. When Codex completes a coding task, a post-hook can automatically run your test suite. If the tests fail, the hook can flag the failure, modify the output, or trigger a retry, all without a developer checking by hand.
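
The flag-or-retry behavior can be sketched in a few lines of Python. This is not Codex's actual hook interface, which isn't described here. `run_task` and `post_hook` are hypothetical callables that stand in for a Codex task and your test suite. `GateOutcome` and the default of three attempts are also illustrative choices.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class GateOutcome:
    passed: bool      # did the post-hook accept the final output?
    attempts: int     # how many times the task was run
    last_output: str  # output from the final attempt


def run_with_post_hook(
    run_task: Callable[[int], str],
    post_hook: Callable[[str], bool],
    max_attempts: int = 3,
) -> GateOutcome:
    """Run a task, check its output with a post-hook, and retry on failure.

    run_task is a hypothetical stand-in for a Codex task (it receives the
    1-based attempt number); post_hook is a hypothetical stand-in for a test
    suite that returns True when the output passes.
    """
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    output = ""
    for attempt in range(1, max_attempts + 1):
        output = run_task(attempt)
        if post_hook(output):
            return GateOutcome(passed=True, attempts=attempt, last_output=output)
        # Flag the failure so it shows up in logs before the next attempt.
        action = "retrying" if attempt < max_attempts else "giving up"
        print(f"post-hook rejected attempt {attempt}; {action}")
    return GateOutcome(passed=False, attempts=max_attempts, last_output=output)


# Example: a fake task that only produces passing output on its second try.
outcome = run_with_post_hook(lambda n: "ok" if n >= 2 else "broken", lambda out: out == "ok")
print(outcome)  # GateOutcome(passed=True, attempts=2, last_output='ok')
```

Keeping the task and the check as plain callables separates the retry policy from whatever actually runs the code or the tests.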

Pre-hooks work at the other end of the lifecycle: you can validate the task or the environment before Codex starts, so the run begins only once its preconditions are met.
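
A pre-hook can be as simple as a script that refuses to start when something is missing. The Python sketch below checks for required executables, environment variables, and a working directory. The specific checks and the `check_preconditions` name are hypothetical. How Codex invokes such a script and reads its result isn't covered here, so the exit-code convention is also an assumption.

```python
import os
import shutil
import sys
from pathlib import Path


def check_preconditions(
    required_tools: list[str],
    required_env: list[str],
    workdir: str,
) -> list[str]:
    """Return human-readable problems; an empty list means it is safe to start.

    All three kinds of check are illustrative assumptions, not Codex requirements.
    """
    problems: list[str] = []
    for tool in required_tools:
        # shutil.which returns None when the executable cannot be found.
        if shutil.which(tool) is None:
            problems.append(f"missing tool: {tool}")
    for name in required_env:
        # Treat an unset or empty variable as missing.
        if not os.environ.get(name):
            problems.append(f"missing or empty env var: {name}")
    if not Path(workdir).is_dir():
        problems.append(f"working directory not found: {workdir}")
    return problems


def main() -> int:
    # Hypothetical requirements for a Python repository.
    issues = check_preconditions(["git", "pytest"], ["CI"], ".")
    for issue in issues:
        print(f"pre-hook: {issue}", file=sys.stderr)
    # Non-zero means "do not proceed" under the usual script convention.
    return 1 if issues else 0
```

As a standalone script you would end with `raise SystemExit(main())`, so the process exit code carries the verdict.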

This turns Codex from a tool you interact with into a component in an automated pipeline. That's a fundamentally different product.

Why This Matters for Engineering Teams

The biggest friction in deploying AI-generated code at scale isn't writing the code — it's trusting it. Every AI coding tool generates bugs, and those bugs still have to be caught by someone.

Hooks let you automate the catching. Instead of relying on a developer to review every piece of AI output, you can define a script that checks it for you. A passing test suite signals that the code does what the tests exercise; a passing lint check signals that it meets the team's style rules.

This doesn't eliminate code review, but it creates a floor: code that fails automated checks never makes it out of the pipeline.
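
The "floor" idea can be sketched as a gate that runs every check and blocks if any of them fails. In this minimal Python sketch, the check names and commands (`pytest -q` for tests, `ruff check .` for linting) are assumptions about a team's tooling, not anything Codex prescribes. Substitute whatever your repository already runs in CI.

```python
import subprocess
import sys
from typing import Mapping, Sequence


def run_quality_gate(
    checks: Mapping[str, Sequence[str]], timeout: float = 600.0
) -> list[str]:
    """Run each named check and return the names of those that failed.

    A check fails if it exits non-zero, cannot be launched, or times out.
    """
    failed: list[str] = []
    for name, cmd in checks.items():
        try:
            result = subprocess.run(
                list(cmd), capture_output=True, text=True, timeout=timeout
            )
        except (FileNotFoundError, subprocess.TimeoutExpired):
            failed.append(name)
            continue
        if result.returncode != 0:
            failed.append(name)
    return failed


def main() -> int:
    # Hypothetical check set; swap in your own test and lint commands.
    checks = {
        "tests": ["pytest", "-q"],
        "lint": ["ruff", "check", "."],
    }
    failed = run_quality_gate(checks)
    if failed:
        print(f"quality gate blocked: {', '.join(failed)}", file=sys.stderr)
        return 1
    print("quality gate passed")
    return 0
```

Running every check instead of stopping at the first failure reports all problems in one pass. Returning the failing names lets the caller decide whether to flag, retry, or block.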

Part of a Bigger Codex Push

OpenAI published a Codex use cases guide alongside the Hooks launch, covering example workflows, team setups, and task types. The combination suggests OpenAI is positioning Codex less as a demo tool and more as serious infrastructure for engineering teams.

The timing makes sense. Claude Code has been gaining traction as an agentic coding tool, and GitHub Copilot has deepened its enterprise relationships. Hooks are OpenAI's answer: a feature that makes Codex more deployable at scale, not just more impressive in demos.

For teams already using Codex in their pipelines, this is a direct upgrade. For teams evaluating AI coding tools, it's worth watching: automated lifecycle scripts are the kind of feature that separates a toy from a tool.