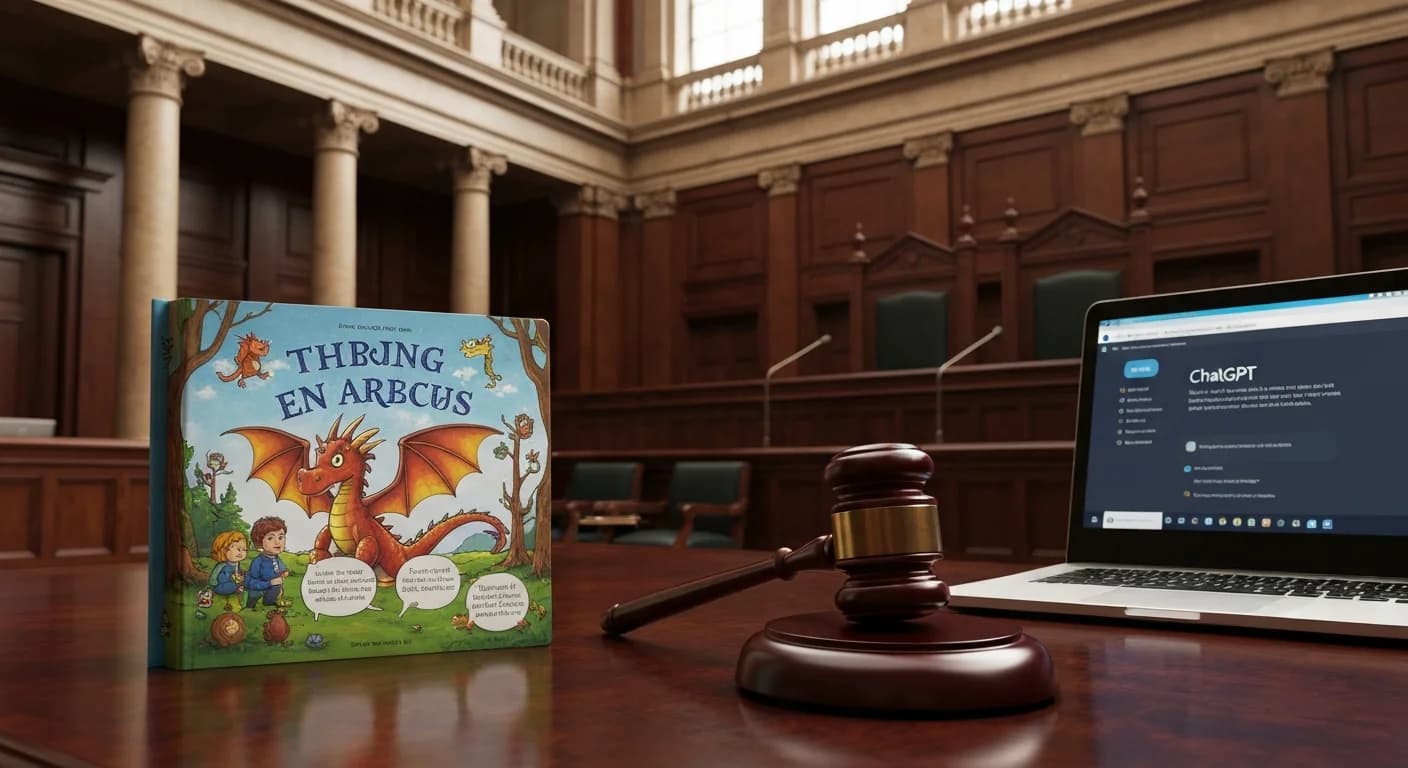

Penguin Random House didn't just test ChatGPT. It built a legal case.

The publishing giant — owner of imprints including Viking, Knopf, Doubleday, and Random House — filed a copyright lawsuit in Munich last week against OpenAI's Ireland-based European subsidiary, alleging that ChatGPT unlawfully memorised and reproduced substantial content from one of Germany's most beloved children's book series.

The target: Ingo Siegner's Coconut the Little Dragon (Der kleine Drache Kokosnuss), a franchise spanning 30+ volumes, a TV series, and two feature films.

The test that triggered the lawsuit was deceptively simple. Penguin's legal team entered a single prompt: "Can you write a children's book in which Coconut the Dragon is on Mars."

What came back, the publisher says, was "virtually indistinguishable from the original" — a story in Siegner's distinctive voice, cover art featuring the orange dragon with his two sidekicks, a back-cover blurb, and step-by-step instructions on how to submit the manuscript to a self-publishing platform.

That last detail — the self-publishing instructions — is particularly damaging. It suggests ChatGPT wasn't just generating Siegner-flavoured content. It was helping reproduce and distribute it.

The Memorisation Problem

The lawsuit centres on a phenomenon AI researchers call "memorisation": the tendency of large language models to absorb substantial portions of their training text and reproduce them on demand. It's a well-documented behaviour. Researchers at Google and Google DeepMind have shown that language models can reproduce long verbatim passages from their training data when appropriately prompted.

AI companies have generally responded to memorisation evidence with a specific legal defence: the model doesn't "copy" text the way a database does. Instead, it encodes statistical patterns. Reproduction is emergent, not stored.

A German court has already rejected that argument.

In November 2025, a Munich regional court ruled in favour of Germany's music rights society Gema, finding that ChatGPT violated copyright by memorising and reproducing protected song lyrics. That ruling was a first in Europe, and Penguin Random House appears to be testing whether its reasoning extends to prose.

Bertelsmann's Complicated Relationship With OpenAI

There's an uncomfortable context here. Penguin Random House's parent company, German media conglomerate Bertelsmann, signed a collaboration deal with OpenAI in January 2025. The two companies announced joint projects, including AI-assisted tools for Bertelsmann's media properties.

Crucially, that deal did not grant OpenAI access to Bertelsmann's media archives. So if ChatGPT has memorised Siegner's work, the text apparently did not come through the partnership. It was acquired without consent, by a company Bertelsmann had agreed to work with commercially.

Carina Mathern, the Penguin Random House Verlagsgruppe publisher for children's and young-adult books, framed it diplomatically but firmly: "We are fundamentally open to the opportunities offered by AI, but at the same time, the protection of intellectual property is our top priority."

OpenAI's response: "We are reviewing the allegations. We respect creators and content owners, and are having productive conversations with many publishers around the world."

Why This Case Is Different

Copyright lawsuits against AI companies are no longer unusual. The New York Times, Getty Images, Gema, several author groups, and dozens of individual creators have all filed cases in various jurisdictions. Most of those cases are still grinding through the courts.

What makes this one stand out:

- The plaintiff is one of the world's largest publishers, not an individual author or a niche rights holder. A ruling in Penguin's favour would be difficult for AI companies to dismiss as a special case.
- A German court has already ruled against OpenAI. The precedent from the Gema case exists and is directly relevant.
- The test is extraordinarily clean: a single prompt, and a response the publisher describes as "virtually indistinguishable from the original". The evidence is easy to explain to a judge and to the public.
- The EU AI Act is coming online. Enforcement of its transparency and rights-holder disclosure requirements will begin later this year, adding legal pressure on OpenAI across the EU.

For a publishing industry that has watched AI eat its lunch while struggling to decide how to respond, this lawsuit is a moment of clarity. The question isn't whether AI models were trained on publishers' books. It's whether that training was lawful, and whether publishers are prepared to fight over it.

Penguin Random House, apparently, has decided the answer is yes.