Amazon has entered the frontier AI race with Olympus, a 2-trillion-parameter model that the company claims is the largest and most capable AI system ever built. The announcement, made at a surprise event at AWS headquarters in Seattle, signals Amazon's intent to compete directly with OpenAI, Anthropic, and Google at the highest tier of AI capability.

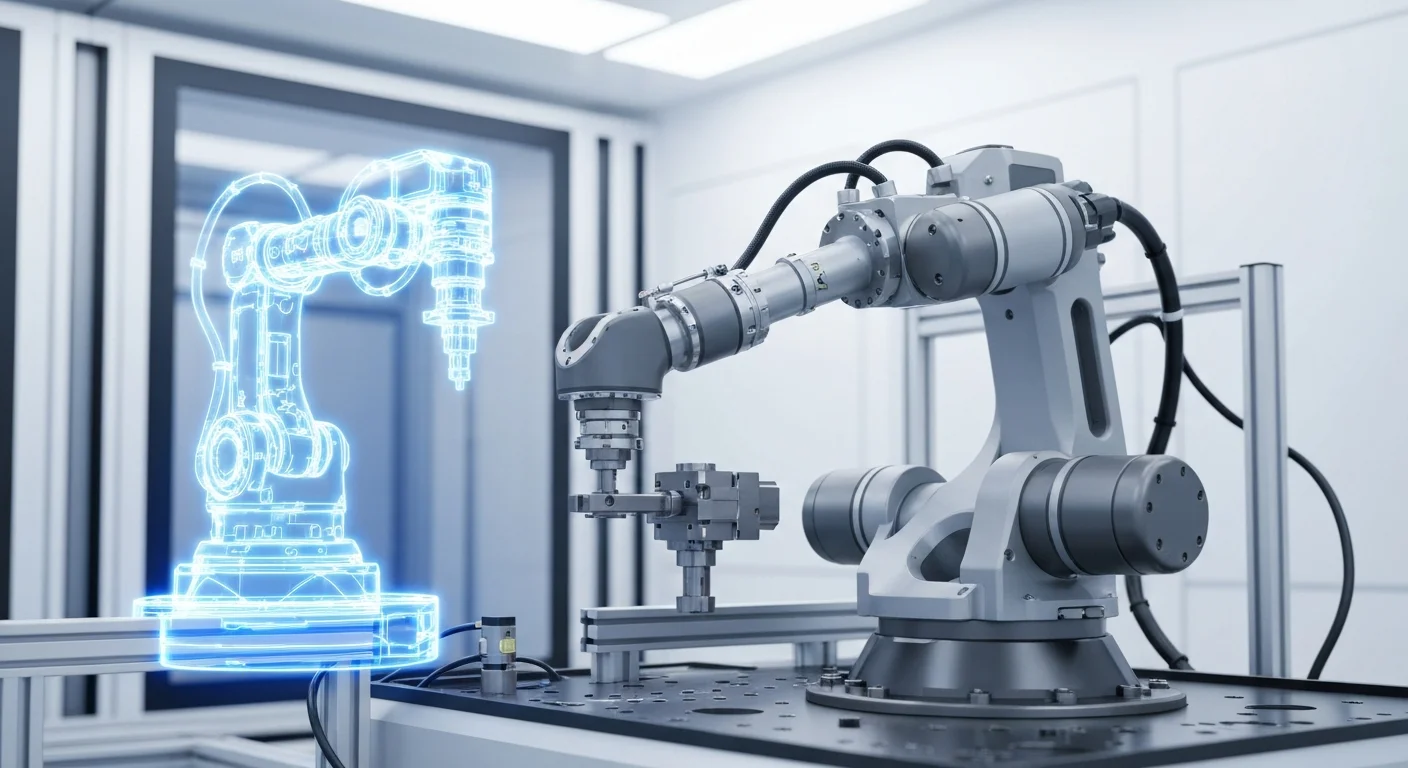

Built on Custom Silicon

The most significant detail is not the model's size but how it was trained. Olympus was built entirely on Amazon's third-generation Trainium chips, which the company designed specifically for large-scale AI training. Amazon deployed over 100,000 Trainium3 chips across multiple AWS regions for the training run, which took approximately four months.

This is a strategic move. By training on its own silicon, Amazon avoids the NVIDIA dependency that constrains most AI companies. It also creates a compelling story for AWS customers: the same infrastructure that trained the world's largest model is available for rent.

"We built Olympus to prove that AWS infrastructure can train frontier models," said Andy Jassy, Amazon CEO. "Every AWS customer now has access to the same compute that built this."

Capabilities and Benchmarks

Amazon shared internal benchmark results showing Olympus outperforming GPT-5 on enterprise-focused tasks including document analysis, financial reasoning, and structured data extraction. On coding benchmarks, the company said Olympus matches Claude Opus's scores on SWE-bench and HumanEval. On general knowledge and conversational quality, Amazon positioned it as competitive but did not claim outright leadership.

The model supports a 256,000-token context window and native multimodal input including text, images, and documents. Amazon emphasized its strength in enterprise use cases — processing contracts, analyzing spreadsheets, summarizing regulatory filings — rather than consumer-facing capabilities.

Alexa Ultra

Olympus powers a new premium tier of Alexa called Alexa Ultra, priced at $19.99 per month. Unlike the current Alexa Plus, which uses a smaller model, Alexa Ultra can handle multi-step tasks: booking travel while cross-referencing calendar conflicts, comparing product specifications across multiple retailers, and managing smart home routines through natural conversation.

Amazon demonstrated Alexa Ultra planning a full dinner party — ordering groceries from Whole Foods, adjusting smart lighting schedules, creating a Spotify playlist based on guest preferences, and sending calendar invitations — all from a single voice request.

Developer Access

For developers, Olympus is available immediately through Amazon Bedrock. Pricing starts at $15 per million input tokens and $45 per million output tokens — roughly 40% cheaper than comparable GPT-5 pricing, which Amazon attributes to Trainium3 efficiency.
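To make the quoted rates concrete, here is a minimal Python sketch of the per-request arithmetic. The two prices are the figures reported above. The function names `estimate_cost` and `implied_baseline` are hypothetical helpers, not part of any Bedrock SDK, and the token counts in the example are illustrative. The baseline calculation only inverts the "roughly 40% cheaper" claim and should not be read as a published competitor price.

```python
from decimal import Decimal

# Prices quoted in the article, in USD per one million tokens.
INPUT_PRICE_PER_MILLION = Decimal("15")
OUTPUT_PRICE_PER_MILLION = Decimal("45")
TOKENS_PER_MILLION = Decimal(1_000_000)


def estimate_cost(input_tokens: int, output_tokens: int) -> Decimal:
    """Estimate the USD cost of one request at the quoted list prices."""
    if input_tokens < 0 or output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    input_cost = Decimal(input_tokens) * INPUT_PRICE_PER_MILLION / TOKENS_PER_MILLION
    output_cost = Decimal(output_tokens) * OUTPUT_PRICE_PER_MILLION / TOKENS_PER_MILLION
    return input_cost + output_cost


def implied_baseline(price: Decimal, discount: Decimal = Decimal("0.40")) -> Decimal:
    """Back out the price that `price` would be `discount` cheaper than.

    Assumption: "roughly 40% cheaper" is treated as exactly 40%.
    """
    return price / (Decimal(1) - discount)


if __name__ == "__main__":
    # Illustrative request: a 200,000-token document with a 2,000-token summary.
    cost = estimate_cost(input_tokens=200_000, output_tokens=2_000)
    print(f"Estimated request cost: ${cost:.2f}")  # prints $3.09

    # Comparison price implied by the 40% claim, per million input tokens.
    print(f"Implied comparison input price: ${implied_baseline(INPUT_PRICE_PER_MILLION):.2f}")
```

At these rates the input side dominates for document-heavy workloads: in the example, the 200,000 input tokens account for $3.00 of the $3.09 total.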

Amazon is also launching a 90-day free preview with generous usage limits to drive adoption. The company said fine-tuning support will be available within 60 days.

Market Implications

The announcement changes Amazon's role in the AI market. Amazon has historically been a platform provider for other companies' models through Bedrock. With Olympus, it becomes a direct competitor to the models it hosts. How Anthropic and Meta, both significant Bedrock partners, respond to this shift will be closely watched.

Wall Street reacted positively. Amazon shares rose 4.2% in after-hours trading following the announcement.