Micron Technology delivered fiscal second-quarter results that obliterated Wall Street expectations, underscoring the extraordinary demand for memory chips driven by the global AI infrastructure build-out.

The Boise, Idaho-based chipmaker reported revenue of $23.86 billion for the quarter ended in early March, a staggering 196% increase from the same period a year ago. Adjusted earnings per share came in at $12.20, well above the $9.31 consensus estimate. GAAP gross margin hit 74.4%, reflecting both tight supply conditions and premium pricing on advanced memory products.

A Forecast That Stunned Analysts

Perhaps more striking than the backward-looking numbers was Micron's forward guidance. The company projected third-quarter revenue of approximately $33.5 billion — plus or minus $750 million — compared with a Wall Street consensus of just $24.29 billion. Adjusted earnings per share are expected to reach roughly $19.15.
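For readers who want to check the size of the gap, the guidance arithmetic can be sketched in a few lines of Python. The figures are the ones quoted above; the function and variable names are illustrative assumptions, not anything Micron publishes.

```python
# Rough check of the guidance figures quoted in the article.
# All amounts are in billions of US dollars; names are hypothetical.

def guidance_range(midpoint, tolerance):
    """Return the (low, high) ends of a 'midpoint plus or minus tolerance' range."""
    return midpoint - tolerance, midpoint + tolerance

def pct_above(value, baseline):
    """Return how far value sits above baseline, as a percentage."""
    return (value / baseline - 1) * 100

guide_mid = 33.5      # Micron's third-quarter revenue guidance midpoint
guide_tol = 0.75      # plus or minus $750 million
consensus = 24.29     # Wall Street consensus cited in the article

low, high = guidance_range(guide_mid, guide_tol)
print(f"Guidance range: ${low:.2f}B to ${high:.2f}B")
print(f"Midpoint vs. consensus: {pct_above(guide_mid, consensus):.1f}% higher")
print(f"Low end vs. consensus: {pct_above(low, consensus):.1f}% higher")
```

On these numbers, even the bottom of the guided range sits roughly a third above what analysts had expected.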

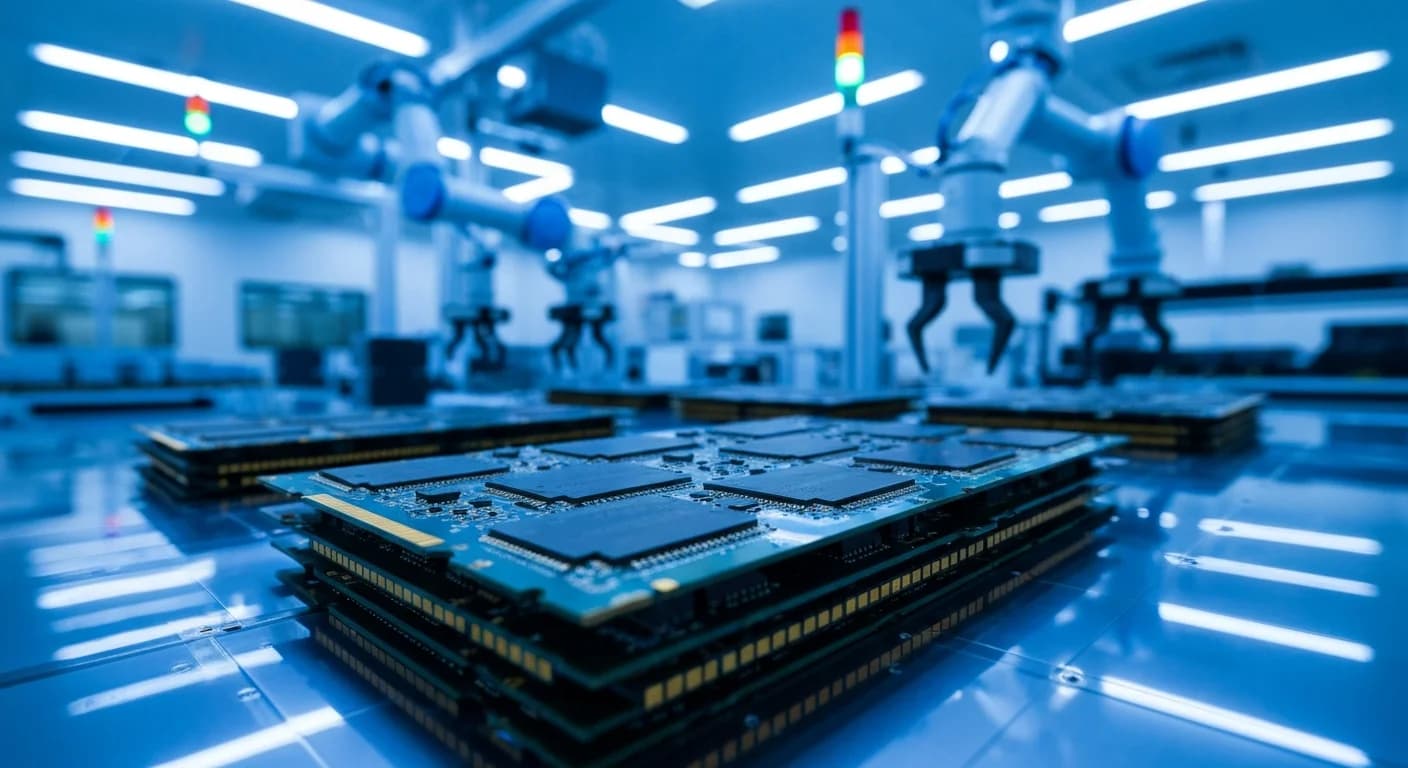

To support the surge, Micron raised its fiscal 2026 capital expenditure plan to $25 billion, up $5 billion from its prior target of $20 billion. The additional spending is aimed squarely at expanding production of high-bandwidth memory (HBM) and advanced DRAM nodes.

HBM: Sold Out and Priced to Match

The star of Micron's earnings story is HBM — the vertically stacked memory modules that sit alongside GPUs in AI accelerators from NVIDIA and AMD. Micron confirmed that its entire calendar-year 2026 HBM supply is spoken for, with price and volume locked in across all customers.

The company now expects the total addressable market for HBM to reach approximately $100 billion by 2028, growing at a compound annual rate of roughly 40% from $35 billion in 2025. Notably, that $100 billion milestone is projected to arrive two years earlier than Micron's previous estimate.
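The growth math behind that projection is easy to verify. The sketch below, in Python, compounds the 2025 figure forward and also backs out the growth rate implied by the two endpoints; it assumes three annual compounding periods (2025 to 2028), and the function names are illustrative.

```python
# Sanity check of the HBM market projection quoted in the article.
# Amounts are in billions of US dollars; names are hypothetical.

def project(start, rate, years):
    """Compound a starting value forward at a fixed annual rate."""
    return start * (1 + rate) ** years

def implied_cagr(start, end, years):
    """Back out the compound annual growth rate linking two values."""
    return (end / start) ** (1 / years) - 1

tam_2025 = 35.0    # HBM market size in 2025, per the article
tam_2028 = 100.0   # projected market size in 2028, per the article
years = 3          # assumption: 2025 to 2028 is three compounding periods

projected = project(tam_2025, 0.40, years)
print(f"$35B compounded at 40% for {years} years: ${projected:.1f}B")

rate = implied_cagr(tam_2025, tam_2028, years)
print(f"Growth rate implied by $35B to $100B: {rate:.1%}")
```

Compounding at exactly 40% gives about $96 billion, and the rate implied by the endpoints is just under 42%, so the "roughly 40%" and "approximately $100 billion" figures are consistent with each other once rounding is taken into account.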

Cloud and Data Center Demand

Micron's cloud memory business alone generated $7.75 billion in Q2 revenue, a jump of more than 160% year over year. Data center demand for DRAM and NAND, measured in bits, is expected to exceed 50% of the industry's total addressable market (TAM) for the first time in calendar 2026, and AI servers are on track to account for over 60% of worldwide consumption by 2030.

What It Means for the AI Economy

Micron's results serve as a barometer for the broader AI hardware supply chain. While GPU makers like NVIDIA capture headlines with trillion-dollar order forecasts, memory remains a critical — and increasingly scarce — bottleneck. Every AI training run and every inference query depends on fast, high-capacity memory, and Micron's numbers suggest demand is outpacing even aggressive capacity expansion plans.

The stock, which has risen more than 350% over the past year, slipped modestly in after-hours trading as investors digested the higher capital spending commitments. But the message from Micron's management was clear: the AI memory supercycle is accelerating, not plateauing.